What is Data Engineering? A Comprehensive Guide for Modern Businesses

According to Gartner, poor data quality costs organizations an average of $12.9 million per year.

At the same time, global data volumes are growing at an unprecedented rate, driven by digital channels, connected devices, cloud applications, and AI initiatives.

Yet despite increased investments in analytics platforms, cloud infrastructure, and artificial intelligence, many enterprises still struggle to turn data into reliable, decision-ready insights.

Dashboards exist, but executives question the numbers. AI pilots launch, but models fail in production. Data lakes expand, yet business clarity does not.

This disconnect raises a fundamental question:

What is data engineering, and why is it critical to enterprise digital transformation?

Data engineering is the foundation that determines whether your analytics and AI initiatives deliver measurable business value or become expensive experiments. It ensures that raw data is collected, structured, validated, governed, and delivered in a way that business stakeholders can trust.

For enterprise IT leaders, CTOs, and digital transformation decision-makers, understanding what data engineering truly means is no longer a technical curiosity. It is a strategic necessity.

Without strong data engineering, digital transformation initiatives stall. With it, organizations unlock scalable analytics, reliable AI, operational intelligence, and long-term competitive advantage.

- What is Data Engineering?

- How Data Engineering Works: Core Components of a Modern Architecture

- Why Data Engineering is a Strategic Priority for Digital Transformation

- Common Challenges in Implementing Data Engineering

- Best Practices for Building a Modern Data Engineering Strategy

- Emerging Trends in Data Engineering

- Innovations and Tools Transforming Data Engineering

- Credencys: Empowering Your Data Engineering Initiatives

- Case Study: Global QSR Brand Data Modernization

- Case Study: Data-Driven Transformation for a Global Automotive Manufacturer

- TL;DR: What Enterprise Leaders Need to Know About Data Engineering

- Frequently Asked Questions (FAQs)

What is Data Engineering?

Data engineering is the discipline of designing, building, and maintaining systems that collect, process, store, and deliver data at scale for analytics, reporting, and AI.

In simpler terms, data engineering transforms raw, fragmented data into structured, reliable, and business-ready information.

But for enterprise leaders, the definition must go beyond technical components.

Data engineering is what ensures that:

- Your executive dashboards reflect accurate, reconciled numbers

- Your AI models are trained on clean, governed datasets

- Your cloud platform performs efficiently at scale

- Your teams trust the insights driving strategic decisions

Without data engineering, data remains scattered across systems, locked in silos, or riddled with inconsistencies.

The Evolution of Data Engineering

Data engineering has evolved significantly over the past decade.

In traditional environments, data workflows relied heavily on rigid ETL processes that moved data into centralized warehouses. These systems were designed primarily for historical reporting.

Modern enterprises, however, operate in a different landscape:

- Data volumes are exponentially larger

- Real-time insights are increasingly required

- Cloud platforms enable elastic scalability

- AI and machine learning depend on high-quality training data

As a result, data engineering has expanded beyond simple ETL. It now includes real-time streaming pipelines, cloud-native architectures, data observability, governance frameworks, and cost optimization strategies.

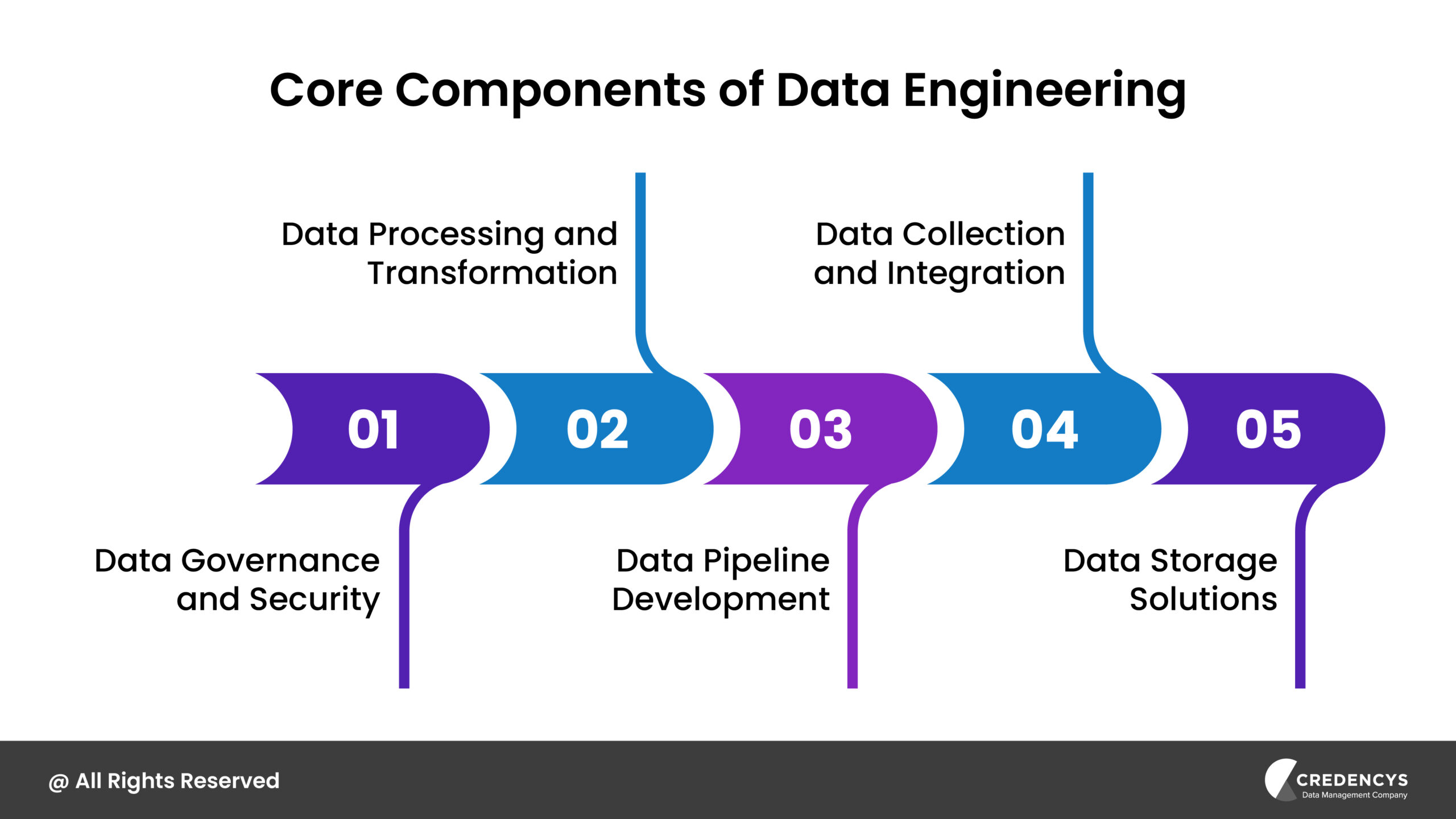

How Data Engineering Works: Core Components of a Modern Architecture

To fully answer the question, what is data engineering, it is important to understand how it functions within an enterprise environment.

Data engineering is not a single tool or platform. It is an ecosystem of processes, technologies, and architectural decisions that ensure data flows reliably from source systems to business applications.

Below are the core components of a modern data engineering framework.

1. Data Ingestion: Capturing Data from Multiple Sources

Enterprises generate data across ERP systems, CRM platforms, ecommerce applications, IoT devices, marketing tools, and third-party APIs.

Data ingestion is the process of collecting this information through:

- Batch processing

- Real-time streaming

- Event-driven architectures

A well-designed ingestion layer ensures:

- No data loss

- Schema consistency

- Secure transfer protocols

- Scalable processing capacity

Without structured ingestion, organizations struggle with incomplete or inconsistent datasets.

2. Data Pipelines and Transformation (ETL / ELT)

Once ingested, raw data must be transformed into usable formats. This involves:

- Cleaning and validating data

- Standardizing formats and schemas

- Enriching datasets with calculated fields

- Deduplicating records

- Applying business rules

Modern architectures often adopt ELT approaches in cloud environments, where transformation occurs after loading data into scalable storage systems.

Well-engineered pipelines reduce manual intervention, improve reliability, and accelerate insight generation.

3. Scalable Data Storage: Warehouse, Lake, or Lakehouse

Processed data must be stored in environments optimized for analytics and AI workloads. Enterprises typically leverage:

- Cloud data warehouses for structured reporting

- Data lakes for large volumes of raw or semi-structured data

- Lakehouse architectures combining both models

The storage layer must balance:

- Performance

- Cost efficiency

- Governance and access controls

- Scalability for growing data volumes

Architectural choices at this stage directly influence cloud costs and reporting speed.

4. Orchestration and Workflow Automation

Modern data systems rely on orchestration tools to manage pipeline execution, scheduling, monitoring, and error handling.

This includes:

- Automated job scheduling

- Dependency management

- Failure alerts and recovery mechanisms

- Version control

Without orchestration, pipelines become brittle and difficult to scale.

5. Governance, Quality, and Observability

Perhaps the most critical layer for enterprise environments is governance. This includes:

- Data quality checks

- Access control policies

- Lineage tracking

- Metadata management

- Audit trails for compliance

Governance ensures that data is not only accessible, but also trustworthy and secure. As organizations scale, observability becomes essential to proactively detect pipeline failures, schema drift, or performance bottlenecks.

Why Data Engineering is a Strategic Priority for Digital Transformation

For many enterprises, digital transformation begins with ambitious goals: AI-driven decision-making, real-time customer engagement, predictive analytics, and cloud modernization.

However, transformation does not succeed because of tools alone. It succeeds because of infrastructure.

This is where understanding what is data engineering becomes strategically important.

Data engineering is the foundation that determines whether transformation initiatives scale or stall.

1. It Builds Trust in Enterprise Data

Executives cannot act on insights they do not trust.

When revenue numbers differ across dashboards or customer counts vary between departments, decision-making slows down. Confidence erodes. Strategic alignment weakens.

A well-architected data engineering framework:

- Standardizes definitions and metrics

- Ensures consistent transformation logic

- Reduces duplication and reconciliation

- Maintains auditability and traceability

The result is a single, trusted source of truth that leadership can rely on.

2. It Accelerates AI and Advanced Analytics

AI initiatives often struggle not because of algorithms, but because of inconsistent, poorly prepared data.

Data engineering:

- Automates dataset preparation

- Maintains clean, version-controlled data

- Enables scalable model training

- Supports continuous data refresh cycles

When pipelines are reliable and governed, AI moves from experimental to enterprise-grade capability.

3. It Reduces Operational Inefficiencies

In many organizations, analytics teams spend significant time:

- Fixing broken pipelines

- Manually reconciling data

- Debugging integration issues

- Managing reactive incidents

This reactive environment limits innovation.

Strong data engineering reduces firefighting and frees teams to focus on optimization, automation, and business value creation.

4. It Controls Cloud Data Costs

Cloud adoption introduces flexibility, but it can also introduce unpredictability.

Without optimized pipelines and workload governance:

- Compute resources are over-provisioned

- Storage grows without lifecycle management

- Transformations run inefficiently

- Costs escalate without clear visibility

Data engineering ensures that scalability does not come at the expense of cost discipline.

5. It Enables Faster Time to Market

Modern enterprises increasingly launch data-driven products, embedded analytics features, and real-time services.

A scalable data engineering backbone:

- Delivers consistent APIs

- Ensures low-latency data access

- Supports secure multi-user environments

- Accelerates new feature rollouts

In competitive markets, speed and reliability directly influence revenue and customer satisfaction.

The Business Reality

Organizations that treat data engineering as a core strategic capability consistently outperform those that treat it as backend support.

When the foundation is strong:

- Analytics becomes proactive rather than reactive

- AI initiatives scale confidently

- Cloud investments deliver measurable ROI

- Leadership gains real-time visibility into performance

Common Challenges in Implementing Data Engineering

While the value of data engineering is clear, implementation in enterprise environments is rarely straightforward. Legacy systems, rapid digital expansion, regulatory pressure, and evolving business requirements create complexity that cannot be solved with tools alone.

Below are the most common challenges organizations face when building scalable data engineering capabilities.

1. Data Silos Across Business Units

As enterprises grow, so do their systems. Sales operates in one platform. Marketing in another. Finance relies on ERP. Operations uses separate supply chain tools. Over time, this creates:

- Conflicting data definitions

- Duplicate customer and product records

- Manual reconciliation efforts

- Delayed cross-functional insights

Without a unified integration and transformation strategy, analytics initiatives remain fragmented and inconsistent.

2. Poor Data Quality at Scale

Scaling data without enforcing quality controls leads to compounding issues. Common problems include:

- Incomplete or missing records

- Inconsistent formats

- Schema drift during system upgrades

- Duplicate or outdated entries

Poor data quality directly affects forecasting accuracy, personalization effectiveness, and executive reporting reliability.

Data engineering must embed validation, cleansing, and monitoring mechanisms into pipelines, not treat quality as an afterthought.

3. Legacy Architecture Limitations

Many enterprises still rely on rigid ETL scripts or on-premise infrastructure designed for smaller data volumes. As data grows:

- Pipelines become slow and unstable

- Maintenance overhead increases

- Innovation slows down

- Technical debt accumulates

Modern transformation initiatives require scalable, cloud-native architectures that can handle structured, semi-structured, and real-time data efficiently.

4. Escalating Cloud Costs

Cloud platforms provide elasticity, but without optimized workloads, costs can quickly spiral. Common inefficiencies include:

- Over-provisioned compute clusters

- Redundant data storage

- Inefficient transformation logic

- Lack of cost monitoring and attribution

Data engineering must include performance optimization and governance strategies to ensure sustainable scalability.

5. Talent Gaps and Operational Overload

Data engineering demands expertise in distributed systems, cloud architecture, orchestration tools, and governance frameworks. However, many organizations:

- Rely on limited in-house expertise

- Blur roles between analysts and engineers

- Underestimate long-term operational needs

This results in reactive firefighting instead of proactive innovation.

6. Security and Compliance Pressures

Data regulations are becoming more stringent across industries and geographies. Enterprises must ensure:

- Controlled access to sensitive data

- Lineage tracking and auditability

- Secure data transfers

- Compliance with regional data residency laws

Embedding security and governance into the data engineering lifecycle is essential to mitigate risk.

The Strategic Imperative

These challenges are not isolated technical issues. They directly affect revenue, customer experience, operational efficiency, and regulatory exposure.

Enterprises that address these obstacles with a structured, governance-first data engineering strategy are better positioned to scale analytics, AI, and digital products confidently.

Best Practices for Building a Modern Data Engineering Strategy

Understanding what is data engineering is only valuable if it translates into execution. For enterprise organizations, success depends on adopting a structured, scalable, and governance-first approach rather than isolated tooling decisions.

Below are proven best practices that help enterprises build resilient and future-ready data engineering foundations.

1. Start with Business Objectives, Not Tools

Data engineering should not begin with platform selection. It should begin with measurable business outcomes. Before architecting pipelines, leadership should define:

- Which KPIs require standardization across departments

- What reporting bottlenecks exist today

- Where AI or automation initiatives are planned

- Which compliance risks need mitigation

When architecture aligns with revenue growth, cost optimization, operational efficiency, or customer experience goals, data engineering becomes a value driver rather than a technical overhead.

2. Design for Scalability and Flexibility

Enterprise data ecosystems rarely remain static. Mergers, acquisitions, new digital products, and regional expansions introduce additional data sources. Modern data engineering architectures should:

- Separate storage and compute for flexibility

- Support distributed processing

- Handle structured, semi-structured, and unstructured data

- Enable modular pipeline development

Scalability ensures that future growth does not require expensive re-engineering efforts.

3. Embed Data Quality at Every Stage

Data quality must be proactive, not reactive. Best-in-class enterprises implement:

- Automated validation rules during ingestion

- Schema enforcement to prevent drift

- Deduplication logic at transformation layers

- Continuous anomaly detection

- Data quality scorecards for stakeholders

When quality checks are embedded directly into pipelines, analytics teams can focus on insights instead of data cleanup.

4. Establish Strong Governance and Metadata Management

Governance is not simply about access control. It is about clarity, traceability, and accountability.

A mature governance framework includes:

- Clear data ownership definitions

- Centralized business glossary

- Lineage tracking from source to dashboard

- Audit trails for regulatory compliance

- Role-based data access policies

Strong governance builds trust across departments and reduces compliance risk.

5. Implement Observability and Performance Monitoring

Data engineering environments must be measurable to remain reliable. Organizations should continuously monitor:

- Pipeline health and failure rates

- Data freshness and latency

- Transformation performance

- Infrastructure utilization

- Cost per workload or department

Observability transforms reactive incident management into proactive performance optimization.

6. Optimize Cloud Cost and Resource Efficiency

Cloud data platforms provide scalability, but without governance, costs escalate quickly. Enterprises should:

- Right-size compute clusters

- Implement auto-scaling and auto-termination policies

- Archive infrequently accessed data

- Eliminate redundant pipelines

- Monitor workload-level cost allocation

Cost optimization is not about reducing innovation. It is about ensuring sustainability.

7. Prioritize Automation and Reusability

Manual workflows slow down innovation and introduce human error. Modern data engineering emphasizes:

- Infrastructure as code

- Reusable transformation templates

- Automated CI/CD pipelines

- Standardized development frameworks

Automation increases reliability while reducing operational overhead.

8. Align Data Engineering with Security by Design

Security must be embedded into every layer of architecture. Best practices include:

- Encryption at rest and in transit

- Zero-trust access models

- Continuous vulnerability assessments

- Data masking for sensitive fields

- Policy-driven access governance

Security is not a compliance checkbox. It is foundational to enterprise credibility.

9. Invest in Skill Development and Organizational Alignment

Technology alone cannot deliver scalable data engineering. Enterprises should:

- Clearly define roles between data engineers, analysts, and scientists

- Encourage cross-functional collaboration

- Upskill internal teams on modern cloud and orchestration tools

- Establish standardized documentation practices

Organizational alignment ensures that technical architecture reflects business reality.

10. Build with Long-Term Evolution in Mind

Data ecosystems evolve alongside the business. A forward-looking data engineering strategy:

- Supports modular upgrades

- Enables experimentation without disrupting production

- Adapts to regulatory changes

- Incorporates emerging technologies like AI-driven pipeline optimization

Future readiness prevents stagnation and technical debt accumulation.

Emerging Trends in Data Engineering

Data engineering is undergoing significant transformations driven by technological advancements and evolving business needs. Key trends shaping the field include:

1. Focus on Data Governance and Security

With increasing data breaches and stringent regulations, robust data governance and security have become paramount. Implementing comprehensive policies, access controls, and compliance measures ensures data integrity and builds customer trust.

2. Real-Time Data Processing

The demand for immediate insights has made real-time data processing essential. Organizations are increasingly adopting stream processing frameworks to analyze data as it arrives, enabling timely decision-making and enhancing responsiveness to market dynamics.

3. Adoption of Data Mesh Architecture

To address challenges associated with centralized data management, organizations are embracing data mesh architectures. This decentralized approach assigns data ownership to specific domain-oriented teams, promoting scalability and aligning data management with business objectives.

What is Data Mesh? A Beginner’s Guide to Decentralized Data Management

4. Rise of Cloud-Native and Serverless Architectures

The migration to cloud-native and serverless architectures is accelerating. These models offer scalability, cost-effectiveness, and reduced infrastructure management overhead, allowing data engineers to focus on core functionalities like pipeline development and data modeling.

5. Utilization of Synthetic Data

To overcome challenges related to data scarcity and privacy, organizations are turning to synthetic data generation. This approach creates artificial datasets that mimic real data, facilitating AI model training and testing without exposing sensitive information.

6. Integration of Artificial Intelligence and Machine Learning

Data engineering is becoming more intertwined with AI and ML. Engineers are developing infrastructures that support the deployment and maintenance of AI models, facilitating automated data processing and predictive analytics. This integration streamlines operations and fosters innovation across various sectors.

7. Emphasis on Data Democratization

There’s a growing focus on making data accessible across all levels of an organization. Data democratization involves creating user-friendly tools and interfaces, enabling non-technical users to engage with data directly. This shift empowers a broader range of stakeholders to derive insights, fostering a data-driven culture.

These emerging trends highlight the dynamic and evolving nature of data engineering, underscoring its critical role in enabling organizations to harness data effectively for strategic advantage.

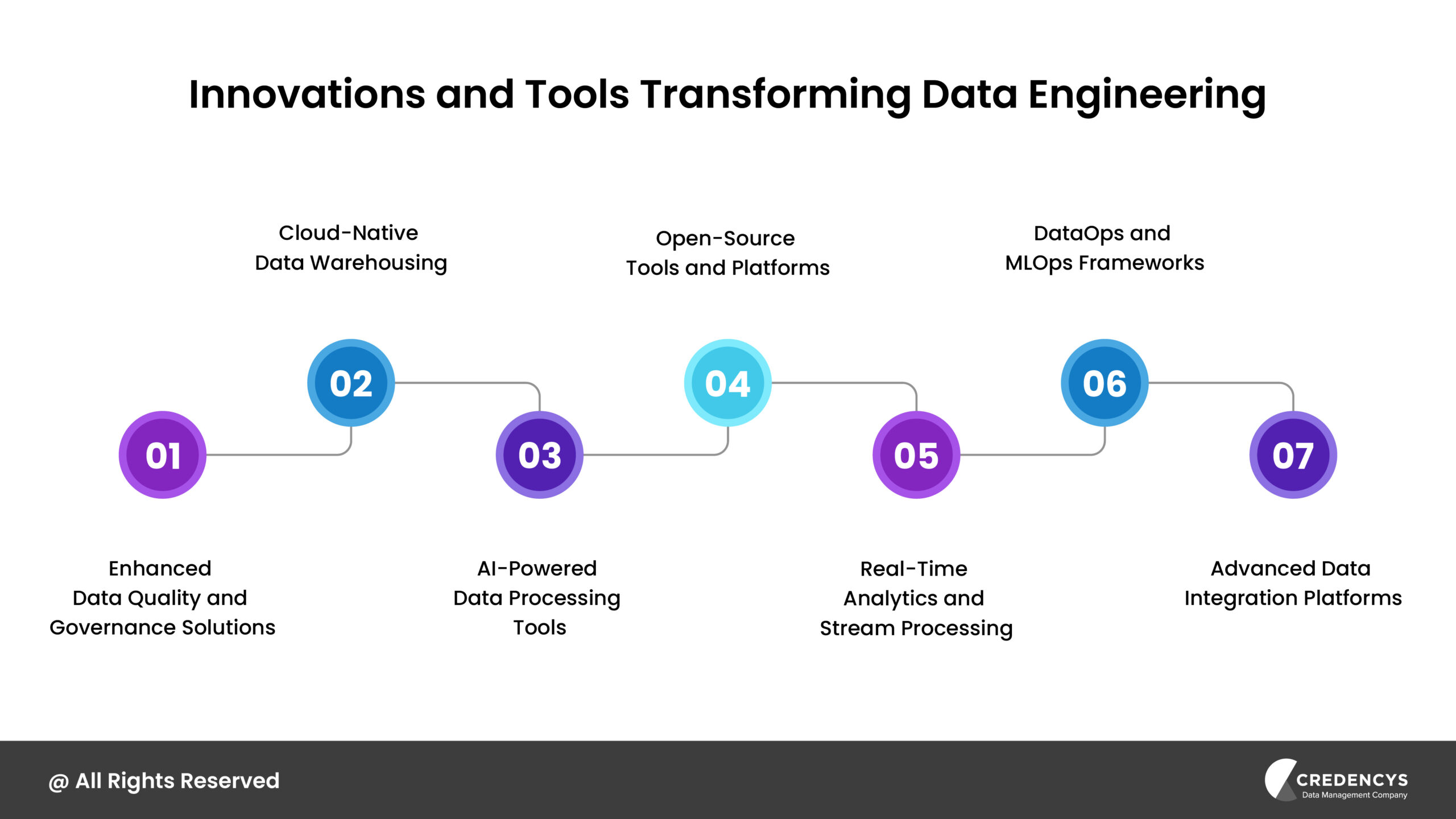

Innovations and Tools Transforming Data Engineering

The data engineering landscape is continually evolving, with new tools and innovations enhancing efficiency, scalability, and integration capabilities. As of 2025, several key developments are shaping the field:

1. Enhanced Data Quality and Governance Solutions

Ensuring data quality and compliance is paramount. Innovative tools now offer automated data validation, lineage tracking, and policy enforcement.

These solutions help maintain data integrity and ensure adherence to regulatory standards, reducing the risk of non-compliance and enhancing trust in data assets.

2. Cloud-Native Data Warehousing

The adoption of cloud-native data warehouses is on the rise, offering scalable storage and compute resources that can adjust to organizational needs. These platforms provide flexibility, cost-effectiveness, and integration with various data services, enabling efficient handling of large datasets and complex queries without significant infrastructure investments.

3. AI-Powered Data Processing Tools

Artificial Intelligence is increasingly embedded in data processing workflows. AI-driven tools automate tasks like data cleansing, anomaly detection, and predictive analytics, enhancing both speed and accuracy.

For instance, AI can identify patterns in data that may not be immediately apparent, providing deeper insights and facilitating proactive decision-making.

4. Open-Source Tools and Platforms

The open-source community continues to contribute a plethora of tools that address various aspects of data engineering. These tools offer flexibility and customization, allowing organizations to tailor solutions to their specific needs.

Engaging with open-source projects also fosters innovation and collaboration, keeping organizations at the forefront of technological advancements.

5. Real-Time Analytics and Stream Processing

The ability to process and analyze data in real-time is becoming a standard requirement. Tools that support stream processing enable organizations to monitor events as they happen, facilitating immediate responses to emerging trends or issues.

This capability is crucial for applications like fraud detection, network security, and dynamic pricing models.

6. DataOps and MLOps Frameworks

The integration of DataOps and MLOps practices is fostering collaboration between data engineering and data science teams. These frameworks emphasize automation, continuous integration, and delivery, ensuring that data pipelines and machine learning models are reliable, reproducible, and scalable.

This approach accelerates the deployment of data-driven applications and models, enhancing organizational agility.

7. Advanced Data Integration Platforms

Modern data integration platforms are becoming more sophisticated, enabling seamless connectivity between diverse data sources and destinations. These platforms support real-time data synchronization, ensuring that organizations can access up-to-date information across all systems.

Features such as automated schema mapping and AI-driven data transformation are reducing manual intervention, streamlining the integration process.

Incorporating these innovations and tools enables data engineers to build robust, efficient, and scalable data infrastructures. Staying abreast of these developments is essential for organizations aiming to leverage their data assets fully and maintain a competitive edge in the data-driven landscape.

Credencys: Empowering Your Data Engineering Initiatives

Credencys offers comprehensive data engineering services designed to transform raw data into actionable insights, driving informed decision-making and business growth. Our Data Engineering services include:

- Data Strategy and Consulting: We collaborate with you to develop tailored data strategies that align with your business objectives, ensuring a roadmap for success.

- Data Pipeline Development and Orchestration: Our experts design and implement robust data pipelines, facilitating seamless data flow across your enterprise systems.

- Data Management and Governance: We establish frameworks to maintain data integrity, security, and compliance, addressing challenges related to data quality and regulatory adherence.

- Advanced Analytics and Business Intelligence: Leveraging cutting-edge tools, we transform processed data into meaningful insights, empowering you to make data-driven decisions.

Our comprehensive services are designed to address your data challenges and drive your business forward.

If you are short on time, here is the executive summary.

Data engineering is the foundation of analytics and AI. It designs and maintains the systems that collect, transform, store, and deliver trusted data at scale.

Without strong data engineering, digital transformation initiatives stall. Dashboards become unreliable, AI models underperform, and cloud costs escalate.

Modern data engineering goes beyond ETL. It includes real-time pipelines, cloud-native architectures, governance frameworks, observability, and cost optimization.

Enterprise value is measurable. Organizations with mature data engineering capabilities experience faster time to insight, improved KPI alignment, and stronger AI ROI.

Strategy matters as much as technology. Aligning architecture with business objectives, embedding governance, and optimizing performance are critical for long-term scalability.

Whether you are modernizing legacy infrastructure, scaling AI initiatives, or improving reporting reliability, our team designs data engineering frameworks aligned with long-term business outcomes.

Explore our Data Engineering Services to see how we help organizations build reliable, future-ready data ecosystems.

Frequently Asked Questions (FAQs)

1. What is data engineering?

Data engineering is the process of designing and maintaining systems that collect, clean, transform, and deliver data for analytics and AI. It ensures raw data becomes structured, reliable, and ready for business decision-making at scale.

2. How is data engineering different from data science?

Data engineering focuses on building and managing data pipelines and infrastructure, while data science focuses on analyzing data and building predictive models. Data engineers create the foundation that data scientists rely on for accurate and scalable insights.

3. Why is data engineering important for digital transformation?

Data engineering enables trusted reporting, scalable AI initiatives, and real-time operational insights. Without strong data foundations, digital transformation efforts struggle due to inconsistent data, unreliable dashboards, and inefficient cloud environments.

4. What are the key components of a data engineering architecture?

A modern data engineering architecture typically includes data ingestion, transformation pipelines (ETL/ELT), scalable storage such as data warehouses or lakehouses, orchestration tools, and governance frameworks for quality and compliance.

5. How do you know if your organization needs stronger data engineering?

If your teams manually reconcile reports, struggle with inconsistent KPIs, face rising cloud costs, or experience delays in analytics and AI initiatives, it likely indicates gaps in your data engineering foundation.

Tags: