How Databricks Pricing Works: A Complete Cost Breakdown

Databricks has become one of the most powerful platforms for modern analytics, AI, and machine learning. Many organizations rely on it not just for data engineering but also for real-time analytics, experimentation, and production-grade AI workloads.

However, while Databricks delivers scale and flexibility, its pricing model often feels unclear to business and technology leaders alike. Costs don’t come from a single line item. Instead, they emerge from how teams design workloads, manage compute, and govern usage over time.

This blog explains how Databricks pricing works, what truly influences your costs, and how organizations can move from unpredictable bills to controlled, optimized spending.

How Does Databricks Charge?

Databricks uses a consumption-based pricing model, which means you pay for what you use rather than a fixed license fee. This approach gives teams freedom to scale up or down, but it also means costs can change rapidly if usage is not actively managed.

At the center of Databricks pricing are Databricks Units (DBUs). DBUs represent the amount of compute power consumed when Databricks workloads are running.

You are charged DBUs based on:

- The type of workload you run (SQL analytics, data engineering, machine learning, streaming)

- The compute configuration, including instance size and whether GPUs are used

- The time duration for which clusters remain active

This means even short-lived spikes in usage can impact your monthly bill if clusters are large or poorly controlled. Databricks is designed to be powerful and flexible, but it assumes teams are intentional about how and when they consume compute.

Understanding Databricks Pricing: What you Need to Know

To truly understand Databricks pricing, it helps to look beyond DBUs and break costs into three interconnected layers.

1. Compute Costs (Primary Cost Driver)

Compute is the most significant and most visible part of Databricks pricing. Different workloads consume DBUs at different rates:

- SQL analytics workloads scale with concurrency and query complexity

- Data engineering pipelines consume DBUs based on cluster size and job duration

- Machine learning workloads often run longer and use specialized hardware, making them more expensive

A common misconception is that faster clusters always reduce cost. In reality, oversized clusters often complete jobs faster but consume DBUs at a much higher rate, resulting in higher overall spend.

2. Cloud Infrastructure Costs

Databricks runs on your cloud provider, which means you also pay for the underlying infrastructure separately. These costs typically include:

- Virtual machine instances

- Object storage

- Network traffic between services

Because these charges appear on cloud invoices rather than Databricks bills, organizations often underestimate the true cost of running Databricks workloads at scale.

3. Operational and Process-Driven Costs

Not all costs are technical. Many arise from how teams operate:

- Clusters left running outside business hours

- Multiple teams solving the same problem independently

- Experimental workloads that quietly move into production

- Lack of cost ownership across departments

These inefficiencies rarely appear in architectural diagrams, but they significantly affect long-term spend.

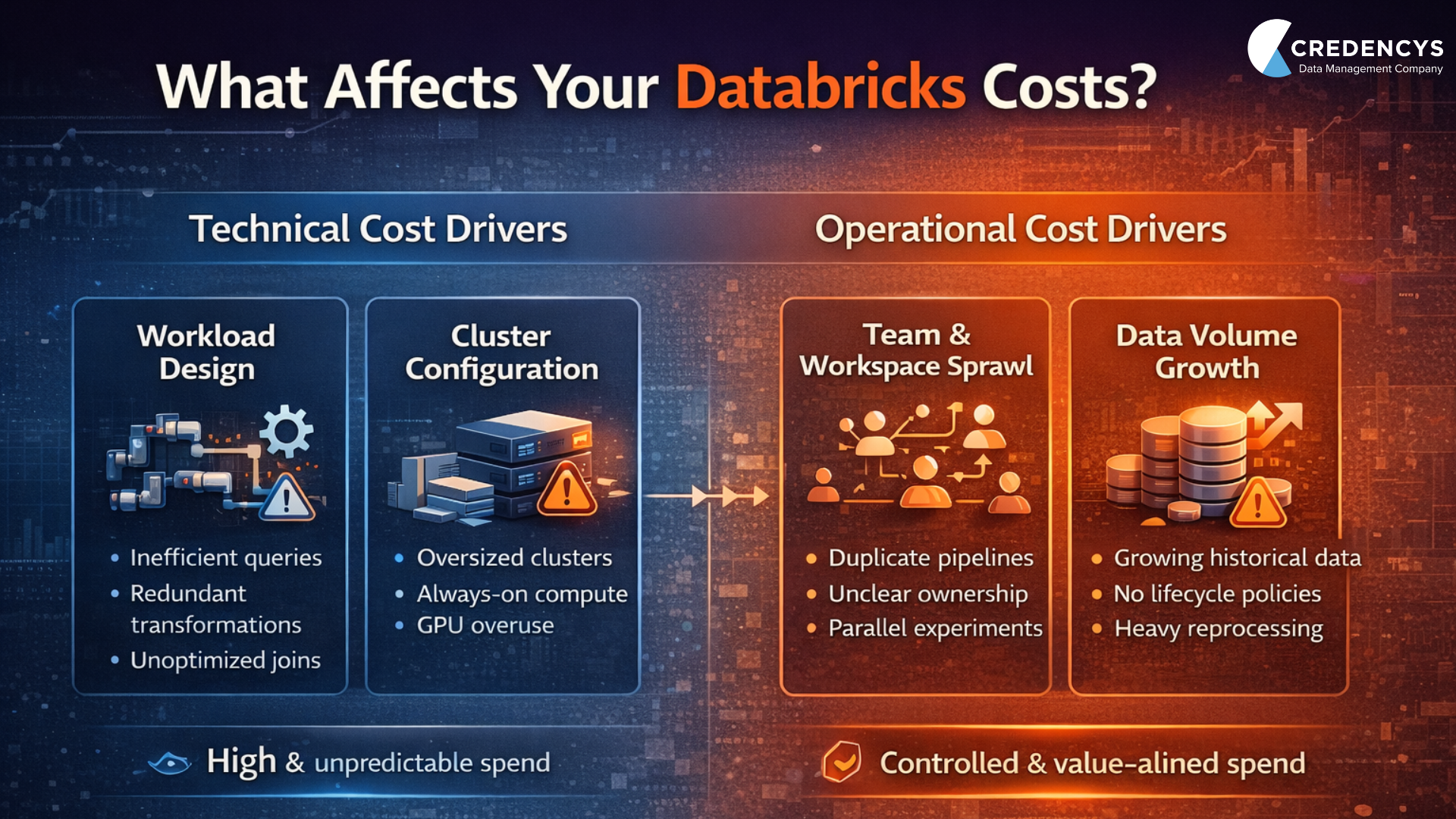

What Affects Your Databricks Costs?

Databricks costs are shaped as much by people and processes as by technology. The most significant cost drivers often sit outside the platform itself.

1. Workload Design and Optimization

Inefficient transformations, redundant data processing, and poorly written queries consume far more compute than necessary. Over time, these small inefficiencies multiply, especially as data volumes grow.

Teams that regularly review and refactor workloads tend to see lower costs without sacrificing performance.

2. Cluster Lifecycle Management

Clusters that remain active when no jobs are running are among the most common causes of cost overruns. Without auto-termination, right-sizing, and scheduling, DBUs continue to accumulate with little business value.

3. Organizational Sprawl

As Databricks adoption spreads across teams, costs rise quickly without governance. When multiple teams run similar pipelines or experiment in isolation, compute usage grows faster than outcomes.

4. Data Growth and Retention

Data rarely shrinks. As historical data accumulates, processing windows widen and storage costs increase. Without tiered storage and lifecycle policies, organizations pay premium compute prices for low-value workloads.

Accurately Collect, Understand, and Optimize your Databricks Costs with Credencys

Understanding Databricks pricing is only the first step. The real challenge is connecting costs to business value and controlling them without slowing innovation.

This is where Credencys, Databricks’ Consulting Partner, helps organizations bring structure and clarity to their Databricks investments.

How Credencys Adds Value

Credencys works closely with data, platform, and finance teams to:

- Map Databricks usage to real business outcomes

- Identify idle clusters, inefficient workloads, and duplicate pipelines

- Redesign architectures for cost-efficiency at scale

- Implement usage visibility and internal chargeback models

- Optimize performance while reducing unnecessary DBU consumption

Rather than treating Databricks costs as an unavoidable expense, Credencys helps teams manage it as a measurable, optimizable investment.

The Business Impact

Organizations working with Credencys typically gain:

- Predictable and explainable Databricks spend

- Reduced waste without compromising performance

- Faster insights through better workload design

- Stronger alignment between engineering, data, and finance teams

Databricks remains a powerful platform. Credencys ensures it remains financially sustainable as well.

Wrapping Up

Databricks pricing is shaped less by the platform itself and more by how intentionally it is used. The same setup can feel expensive or efficient depending on workload design, governance, and visibility into usage.

The organizations that succeed with Databricks don’t focus on reducing costs in isolation. They focus on aligning spend with value. They understand which workloads matter, where experimentation is justified, and where guardrails are needed to prevent waste.

As data volumes and AI use cases continue to grow, Databricks will remain central to enterprise analytics strategies. The real differentiator will be whether teams have the clarity and discipline to scale it responsibly.

When managed well, Databricks becomes more than a robust data platform. It becomes a predictable, sustainable foundation for innovation and growth.

Frequently Asked Questions (FAQs)

1. How does Databricks pricing work?

Databricks pricing is based on compute usage measured in Databricks Units (DBUs), along with underlying cloud infrastructure costs, including storage and networking.

2. What impacts Databricks costs the most?

The biggest drivers are cluster size, workload type, runtime duration, and governance gaps, such as idle clusters and duplicate pipelines.

3. Is Databricks expensive?

Databricks is not inherently expensive, but costs can grow quickly without workload optimization, visibility, and usage controls.

Tags: