Boost your Quality Assurance with Agile Testing

On July 1st, India rolled out its much-hyped goods and services tax (GST). However, the software development community was particularly amused by a report published in a newspaper. Quoting Navin Kumar, the chairman of the GSTN (the network system which will support the nationwide rollout), the report said – “there is no time to do beta testing…it (software) will stabilize over three-four months from the GST introduction.”

Here’s his statement verbatim:

“Nowhere in the world does the hardening of software take place before the roll-out. We would have loved to have a couple of months more before the roll-out. When you are about to deploy the software, there is a code freeze when code writing stops. In the next 10 days you do the testing. There is no time for that now. It takes three-four months for stabilization to happen.”

We can only hope that Mr. Kumar and his team have taken lessons from the failure of the “Obamacare” website in 2013.

Apparently, quality testing remains on the back burner for teams across the globe. While developers have become accustomed to Agile software development practices, software testing teams are still facing issues. That’s why we often hear complaints about:

Testing being pushed so late in the sprint that there is no time to review, fix and retest the defects

Or

Not having enough time to go through functional, integration, regression, usability, security and other testing

This is despite the fact that Agile, by definition, treats quality as non-negotiable. In Agile software projects, teams are expected to deliver a functioning, defect-free, potentially shippable product increment in every Sprint. Yet quality assurance is not an easy task. Producing high-quality code may require several different approaches to testing. Depending on the requirement, teams have to write different kinds of test cases, as described below:

Functionality Test Cases

A type of black-box testing that checks whether the application’s interface works correctly with the rest of the system and its users. The tester looks only at inputs and outputs, not at the internal code.
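As a minimal sketch of a black-box functionality test, the example below exercises a hypothetical `login` function purely through its inputs and outputs. The function, the `USERS` table and the status messages are assumptions made for illustration, not part of any real application.

```python
# Hypothetical system under test: a tiny login function.
# `USERS`, `login` and the status strings are illustrative assumptions.
USERS = {"alice": "s3cret"}


def login(username: str, password: str) -> str:
    """Return a status message for a login attempt."""
    if USERS.get(username) == password:
        return "welcome"
    return "invalid credentials"


# Black-box tests: they only check observable behaviour,
# never how `login` is implemented internally.
def test_valid_login():
    assert login("alice", "s3cret") == "welcome"


def test_wrong_password():
    assert login("alice", "wrong") == "invalid credentials"


def test_unknown_user():
    assert login("bob", "s3cret") == "invalid credentials"


if __name__ == "__main__":
    test_valid_login()
    test_wrong_password()
    test_unknown_user()
    print("all functionality tests passed")
```

Because the tests never look inside `login`, the implementation can change freely as long as the observable behaviour stays the same.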

Performance Test Cases

These test cases check the responsiveness of the application under various loads. In large applications, performance tests are usually automated.
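The idea of measuring responsiveness under various loads can be sketched with nothing more than the standard library. Here `handle_request` is a hypothetical stand-in for the real application, and the load sizes are arbitrary; an actual project would more likely use a dedicated load-testing tool.

```python
import time


def handle_request(payload: list) -> int:
    """Hypothetical request handler standing in for the real application."""
    return sum(x * x for x in payload)


def measure(load: int, repeats: int = 5) -> float:
    """Return the best wall-clock time (seconds) for handling `load` items."""
    payload = list(range(load))
    timings = []
    for _ in range(repeats):
        start = time.perf_counter()
        handle_request(payload)
        timings.append(time.perf_counter() - start)
    return min(timings)


def run_load_profile(loads: list) -> dict:
    """Measure the handler at each load size and collect the results."""
    return {load: measure(load) for load in loads}


if __name__ == "__main__":
    for load, seconds in run_load_profile([1_000, 10_000, 100_000]).items():
        print(f"{load:>7} items: {seconds * 1000:.3f} ms")
```

An automated performance test would compare these numbers against an agreed threshold and fail the build when the threshold is exceeded.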

Integration Test Cases

An application consists of multiple software components, coded by different programmers.

The purpose of integration tests is to check that when all these components are put together, they function as expected.
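A rough illustration of an integration test: two separately written components, a price catalog and a shopping cart, are wired together and checked as a unit. Both classes and their method names are hypothetical and exist only for this sketch.

```python
class PriceCatalog:
    """Component A (hypothetical): looks up prices by product code."""

    def __init__(self, prices):
        self._prices = dict(prices)

    def price_of(self, sku):
        return self._prices[sku]


class Cart:
    """Component B (hypothetical): relies on a catalog to compute totals."""

    def __init__(self, catalog):
        self._catalog = catalog
        self._items = []

    def add(self, sku, quantity=1):
        self._items.append((sku, quantity))

    def total(self):
        return sum(self._catalog.price_of(sku) * qty for sku, qty in self._items)


def test_cart_total_uses_catalog_prices():
    # Integration test: the real catalog is used, not a mock,
    # so a mismatch between the two components would surface here.
    catalog = PriceCatalog({"shirt": 20, "socks": 5})
    cart = Cart(catalog)
    cart.add("shirt", 2)
    cart.add("socks")
    assert cart.total() == 45


if __name__ == "__main__":
    test_cart_total_uses_catalog_prices()
    print("integration test passed")
```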

User Interface Test Cases

As the name suggests, these test cases check the look and feel of the interface, design inconsistencies, typography, grammar, spelling, etc. They usually also involve cross-browser testing.

Usability Test Cases

Usability testing evaluates how easy the application is to use. Users who may not have any prior knowledge of the application are given certain tasks to perform. This helps in identifying issues from a first-time user’s perspective.

Database Test Cases

Database tests check that the application code stores data correctly and handles it securely as it moves between the source and the destination database.
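A small sketch of a database test using Python's built-in `sqlite3` module with in-memory databases. The `customers` table, its columns and the `copy_customers` helper are assumptions chosen for illustration; the test checks that rows arrive unchanged in the destination and that a uniqueness constraint is enforced.

```python
import sqlite3

QUERY = "SELECT id, email FROM customers ORDER BY id"


def make_db():
    """Create an in-memory database with a hypothetical customers table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT NOT NULL UNIQUE)"
    )
    return conn


def copy_customers(source, destination):
    """Code under test: copy every customer row from source to destination."""
    rows = source.execute(QUERY).fetchall()
    destination.executemany(
        "INSERT INTO customers (id, email) VALUES (?, ?)", rows
    )
    destination.commit()


def test_rows_survive_transfer():
    source, destination = make_db(), make_db()
    source.executemany(
        "INSERT INTO customers (id, email) VALUES (?, ?)",
        [(1, "a@example.com"), (2, "b@example.com")],
    )
    source.commit()
    copy_customers(source, destination)
    assert destination.execute(QUERY).fetchall() == source.execute(QUERY).fetchall()


def test_duplicate_email_is_rejected():
    db = make_db()
    db.execute("INSERT INTO customers (id, email) VALUES (1, 'a@example.com')")
    try:
        db.execute("INSERT INTO customers (id, email) VALUES (2, 'a@example.com')")
    except sqlite3.IntegrityError:
        return  # the UNIQUE constraint did its job
    raise AssertionError("duplicate email was stored")


if __name__ == "__main__":
    test_rows_survive_transfer()
    test_duplicate_email_is_rejected()
    print("database tests passed")
```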

Security Test Cases

In the age of high-profile data breaches, application security testing is becoming a specialized endeavor, and organizations sometimes maintain a full-time team just for the purpose. According to the Open Source Security Testing Methodology Manual (OSSTMM), security testing consists of seven major test areas:

- Vulnerability Scanning

- Security Scanning

- Penetration Testing

- Risk Assessment

- Security Auditing

- Posture Assessment

- Ethical Hacking

In addition to the above test cases, quality assurance in Agile involves “User Acceptance Criteria” and a “Definition of Done”.

User Acceptance Criteria

In Agile testing, user stories are incomplete without their Acceptance Criteria (AC). AC are critical pieces of documentation that help the developers in a team write accurate, unambiguous test cases and understand the business value better. Although the Product Owner writes the AC, Quality Analysts and Developers also contribute to improving them.

Standard Acceptance Criteria Format (derived from Gherkin)

Given <precondition(s)> When <some action> Then <a result/set of results>

Example: Given the user hasn’t ordered yet, when the user adds any apparel into the shopping cart, then apply a discount of 20% to the total
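The Given/When/Then example above maps naturally onto an automated test. The sketch below is one possible reading of that criterion: the `User` and `Cart` classes, the `"apparel"` category label and the prices are all assumptions made for illustration, and the discount is applied to the whole cart total as the criterion states.

```python
from dataclasses import dataclass, field
from decimal import Decimal


@dataclass
class User:
    has_ordered_before: bool = False


@dataclass
class Cart:
    user: User
    items: list = field(default_factory=list)  # (name, category, price) tuples

    def add(self, name, category, price):
        self.items.append((name, category, Decimal(price)))

    def total(self):
        subtotal = sum((price for _, _, price in self.items), Decimal("0"))
        has_apparel = any(category == "apparel" for _, category, _ in self.items)
        # Assumed rule: first-time buyers with apparel in the cart get 20% off.
        if not self.user.has_ordered_before and has_apparel:
            return subtotal * Decimal("0.80")
        return subtotal


def test_first_time_buyer_gets_discount():
    # Given the user hasn't ordered yet
    cart = Cart(User(has_ordered_before=False))
    # When the user adds any apparel into the shopping cart
    cart.add("t-shirt", "apparel", "50.00")
    # Then apply a discount of 20% to the total
    assert cart.total() == Decimal("40.00")


def test_returning_buyer_pays_full_price():
    cart = Cart(User(has_ordered_before=True))
    cart.add("t-shirt", "apparel", "50.00")
    assert cart.total() == Decimal("50.00")


if __name__ == "__main__":
    test_first_time_buyer_gets_discount()
    test_returning_buyer_pays_full_price()
    print("acceptance tests passed")
```

Keeping the Given/When/Then wording as comments makes it easy to trace each test back to the criterion it verifies.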

AC ensure that all the parameters of a user story are met as agreed by every stakeholder. As these test cases are also used by the end user or client, together they constitute an important phase of testing before going into production. Also, a user story is marked as “Done” only when its AC are “Accepted”.

Definition of Done (DoD)

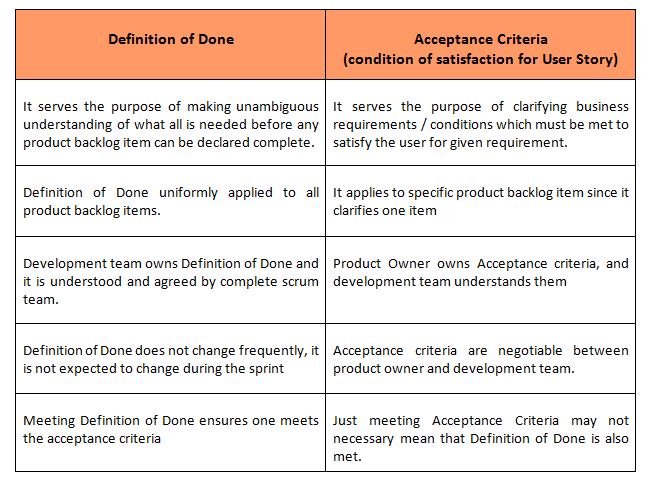

It’s the final piece of documentation in Agile testing, used by cross-functional teams to evaluate whether a business requirement is complete. DoD is often confused with AC. In a discussion on StackExchange, an Agile practitioner writes:

“In my opinion, there is no difference. Definition of done and acceptance criteria are used interchangeably. You cannot meet the definition of done without all criteria being met and you cannot be not done if all criteria have been met. If you find yourself in the latter, then you simply have two sets of criteria for some unknown reason”

Another practitioner, in a blog post on Scrum Alliance, writes:

“…both the DoD and user story acceptance criteria are musts (and different). The Definition of Done (DoD) is a clear and concise list of requirements that the user story must satisfy for the team to call it complete. The DoD must apply to all items in the backlog. It can be considered a contract between the Scrum team and the product owner.”

If you have used AC or DoD, but not both, here’s a comparison which can help you understand their difference and significance:
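The distinction drawn in the second quote can also be pictured in code. The following is an illustrative model only, not taken from either source quoted above: the Definition of Done is a single checklist shared by every backlog item, while acceptance criteria belong to one user story. The checklist entries and the `UserStory` class are assumptions.

```python
from dataclasses import dataclass, field

# Assumed team-wide checklist; it applies to every item in the backlog.
DEFINITION_OF_DONE = ("code reviewed", "unit tests pass", "documentation updated")


@dataclass
class UserStory:
    title: str
    # Story-specific acceptance criteria: criterion text -> accepted or not.
    acceptance_criteria: dict
    # Which of the shared Definition of Done checks have been completed.
    completed_checks: set = field(default_factory=set)

    def is_done(self) -> bool:
        ac_met = all(self.acceptance_criteria.values())
        dod_met = set(DEFINITION_OF_DONE) <= self.completed_checks
        return ac_met and dod_met


if __name__ == "__main__":
    story = UserStory(
        "First-order discount",
        {"20% discount applied for first-time apparel buyers": True},
    )
    print(story.is_done())  # AC accepted, but the shared checklist is still open
    story.completed_checks.update(DEFINITION_OF_DONE)
    print(story.is_done())  # both the AC and the DoD are now satisfied
```

In this model a story can have every acceptance criterion accepted and still not be done, which is the situation the second quote describes.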

Quality assurance in Agile software projects depends on how well the team collaborates. QAs have to be an integral part of the team and should be engaged throughout the sprint, employing TDD/BDD approaches. This means the tests described above should be performed in parallel with development rather than at the end of the sprint. While QAs can stick to black-box testing, developers should complete the white-box tests. Further, your teams will need better project management tools for realistic sprint planning, accurate effort estimation, and user story prioritization in order to deliver quality builds in the committed time. Automation will also improve the reliability and speed of Agile testing to a great extent.

Credencys Solutions Inc is a leading mobile application development company and solutions provider that has helped numerous businesses grow. If you wish to develop a POC/Prototype/MVP, we can help you out with our Blueprint workshop.
