Databricks Lakehouse Migration: Best Practices & Key Considerations

As data-driven strategies continue to dominate enterprise roadmaps, the need for a unified data architecture has become more pressing than ever.

According to IDC, global data volume is expected to reach 175 zettabytes by 2025, up from just 33 zettabytes in 2018.

This explosive growth underscores the urgent need for scalable, cost-effective, and high-performance data platforms.

The Databricks Lakehouse Platform combines data lakes and data warehouses in a single architecture that promises scalability, flexibility, and cost-efficiency.

However, migrating to a Lakehouse architecture is not a plug-and-play task. It requires thoughtful planning, robust execution, and post-migration optimization to realize its full value. In this blog, we walk you through Databricks Lakehouse migration best practices and key considerations that can help you navigate this transformation seamlessly.

Why Migrate to the Databricks Lakehouse?

The Databricks Lakehouse combines the best elements of data lakes and data warehouses into a single, unified architecture. Traditional data architectures often force organizations to choose between flexibility and performance, or between raw data access and governed analytics. The Lakehouse model eliminates this trade-off.

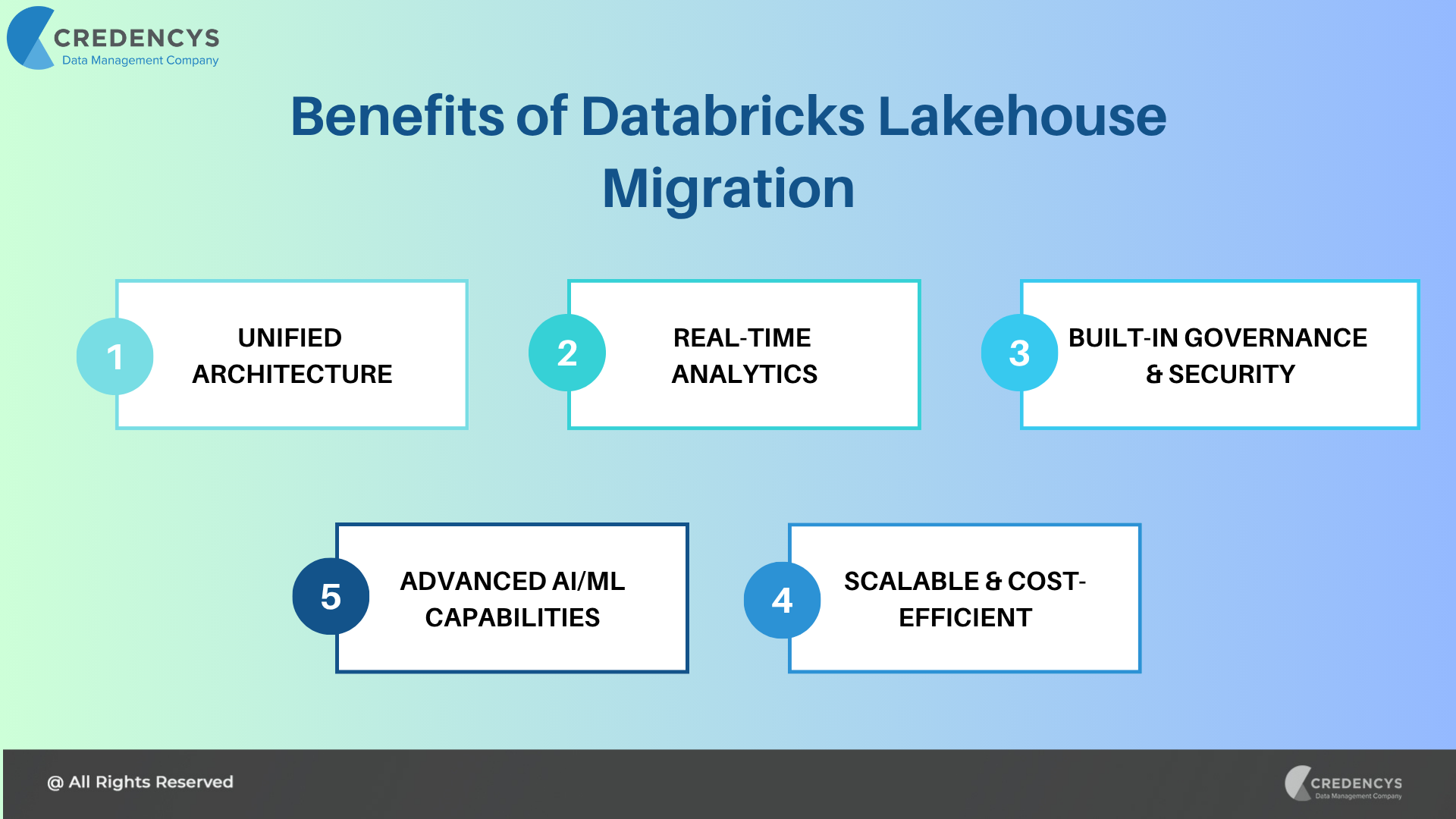

Here’s why businesses are increasingly moving their data infrastructure to Databricks Lakehouse:

1. Unified Data Architecture

The Lakehouse consolidates data warehousing and data lake capabilities into a single platform. This eliminates the need for complex data movement and duplication between systems, reducing architectural complexity and operational overhead.

2. Performance at Scale

Built on Apache Spark and accelerated by Photon, Databricks’ vectorized query engine, the Lakehouse is designed to run both batch and streaming workloads quickly. Organizations can process petabytes of data efficiently while meeting the demands of real-time analytics.

3. Native Support for AI & ML Workloads

Unlike traditional warehouses, Databricks is purpose-built for machine learning and advanced analytics. With integrated tools like MLflow and seamless integration with popular ML libraries (e.g., TensorFlow, PyTorch, scikit-learn), data scientists and engineers can collaborate within a unified environment.

4. Open Standards and Interoperability

The Lakehouse builds on open-source technologies and formats such as Delta Lake, Apache Parquet, and Apache Spark. This reduces the risk of vendor lock-in and makes it easier to integrate with other tools and ecosystems.

5. Cost-Effective Storage and Compute

With the separation of storage and compute, you only pay for what you use. Organizations can scale resources elastically, optimizing performance without incurring unnecessary costs.

6. Robust Data Governance and Security

Using Unity Catalog, organizations can apply consistent data access policies across all workloads, ensuring data privacy and compliance with relevant regulations. Fine-grained access controls, lineage tracking, and audit logging are all built in.

7. Real-Time and Batch Analytics in One Place

Traditional systems often require separate infrastructures for streaming and batch processing. The Lakehouse supports both paradigms natively, allowing for seamless integration of real-time insights with historical context.

8. Accelerated Time-to-Insight

With a simplified architecture and a collaborative workspace for data engineers, analysts, and scientists, teams can reduce the time spent on integration and infrastructure, focusing more on delivering business value.

Key Considerations Before Migration

Before you embark on migrating to the Databricks Lakehouse, it’s crucial to evaluate your current data ecosystem, business goals, and technical requirements. Rushing into migration without a clear roadmap can result in inefficiencies, cost overruns, or performance issues. Here are the key factors you must consider:

1. Assess Your Current Data Landscape

Start with a complete audit of your existing data systems.

- Data Sources: Catalog all current data sources—structured databases (e.g., Oracle, SQL Server), semi-structured data (e.g., JSON, XML), and unstructured content (e.g., logs, images).

- Data Volume & Velocity: Understand the size of your datasets and the rate at which new data is generated or ingested.

- Workload Types: Identify how data is currently used. Are you mainly running batch analytics? Real-time processing? BI dashboards? Machine learning?

- Technical Debt: Consider whether your current architecture includes legacy systems or redundant processes that may complicate migration.
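One way to keep the audit actionable is to record it as structured data rather than a slide. The sketch below is a minimal, hypothetical inventory: the `SourceSystem` fields, the workload labels, and every name and size are illustrative assumptions, not output from any Databricks tool.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class SourceSystem:
    """One row of a hypothetical migration inventory."""
    name: str
    kind: str              # "structured", "semi-structured", or "unstructured"
    size_gb: float         # current volume
    daily_growth_gb: float # velocity: how fast new data arrives
    workload: str          # e.g. "batch", "streaming", "bi", "ml"
    legacy: bool = False   # flags technical debt that may complicate migration


def summarize(inventory):
    """Return total size and daily growth per workload type."""
    totals = defaultdict(lambda: {"size_gb": 0.0, "daily_growth_gb": 0.0})
    for src in inventory:
        totals[src.workload]["size_gb"] += src.size_gb
        totals[src.workload]["daily_growth_gb"] += src.daily_growth_gb
    return dict(totals)


# Illustrative entries only; the names and sizes are made up.
inventory = [
    SourceSystem("oracle_erp", "structured", 1200.0, 4.0, "batch", legacy=True),
    SourceSystem("clickstream_json", "semi-structured", 800.0, 25.0, "streaming"),
    SourceSystem("app_logs", "unstructured", 300.0, 10.0, "batch"),
]

summary = summarize(inventory)
legacy_sources = [s.name for s in inventory if s.legacy]
print(summary)
print(legacy_sources)
```

A summary like this makes it easier to see which workload types carry the most volume and which sources need extra attention because of legacy dependencies.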

2. Define Migration Objectives

Clear objectives help align teams and measure success post-migration.

- Cost Optimization: Are you trying to reduce infrastructure, licensing, or operational costs?

- Improved Performance: Do you need faster query response times, better throughput, or scalable compute?

- Data Democratization: Are you aiming to give wider access to data across teams while maintaining governance?

- AI/ML Enablement: Are your data scientists hindered by a lack of access to high-quality, clean data for experimentation?

- Real-Time Insights: Do you want to switch from batch to near-real-time decision-making?

Document these goals and rank them by business impact.

3. Understand Data Governance Requirements

Moving to the Lakehouse must not compromise on compliance, security, or control.

- Regulatory Compliance: Ensure the platform supports your compliance needs—GDPR, HIPAA, CCPA, SOC 2, etc.

- Data Lineage and Auditing: Can you track where your data originated, how it’s been transformed, and who accessed it?

- Access Controls: Plan role-based access to datasets using Unity Catalog. Define how departments, teams, and individuals will access data.

- Data Masking & Encryption: Understand how sensitive data will be protected at rest and in transit.

A robust governance strategy ensures that agility does not come at the cost of security.
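Role-based access in Unity Catalog is typically expressed as SQL `GRANT` statements on catalogs, schemas, and tables. The sketch below only builds those statements as strings so an access plan can be reviewed before it is applied; the helper name `grant_statements` and the catalog, schema, table, and group names are hypothetical.

```python
def grant_statements(catalog, schema, tables, group, privilege="SELECT"):
    """Build Unity Catalog GRANT statements giving `group` access to `tables`."""
    # Backticks quote principals that contain spaces or special characters.
    principal = f"`{group}`"
    stmts = [
        # A principal needs USE CATALOG and USE SCHEMA before table privileges apply.
        f"GRANT USE CATALOG ON CATALOG {catalog} TO {principal}",
        f"GRANT USE SCHEMA ON SCHEMA {catalog}.{schema} TO {principal}",
    ]
    for table in tables:
        stmts.append(
            f"GRANT {privilege} ON TABLE {catalog}.{schema}.{table} TO {principal}"
        )
    return stmts


# Hypothetical names, for illustration only.
stmts = grant_statements("main", "sales", ["orders", "customers"], "analysts")
for stmt in stmts:
    print(stmt)
```

In a Databricks notebook, each string could then be passed to `spark.sql(...)` by a user who is allowed to grant on those objects. Keeping the plan in code also makes it reviewable in version control.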

4. Identify Stakeholders and Build a Cross-Functional Team

Successful migrations require collaboration across departments.

- Executive Sponsors: Ensure leadership buy-in to secure budget and support.

- Data Engineering Team: They’ll handle migration logic, pipeline development, and performance tuning.

- Data Analysts & Scientists: Their input is essential to define how data should be structured and accessed post-migration.

- IT & Security: Involved in access provisioning, identity federation, and compliance enforcement.

- Business Units: End users who will validate whether the migration supports reporting, analytics, and decision-making.

Engage them early and continuously to align expectations and minimize resistance.

5. Estimate Downtime and Migration Effort

Understanding the migration effort helps manage risks and timelines.

- Lift-and-Shift vs. Redesign: Will you migrate existing systems as-is or re-architect for the Lakehouse model?

- Data Volume and Complexity: Large or fragmented datasets require more planning and testing to ensure optimal performance.

- Downtime Tolerance: Can your business tolerate temporary data access disruptions? If not, plan for a parallel-run strategy.

- Migration Tools: Evaluate Databricks migration accelerators, partner tools, and native connectors for your source systems.

Create a detailed timeline with phases: assessment, pilot, complete migration, testing, and optimization.
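A rough transfer-time estimate helps size the bulk-copy phase of that timeline. The function below is a back-of-the-envelope sketch: the name `transfer_hours` and the default `efficiency` of 0.7 (the share of nominal bandwidth actually achieved) are assumptions to replace with measured values from a pilot.

```python
def transfer_hours(volume_tb, link_gbps, efficiency=0.7):
    """Estimate hours needed to copy `volume_tb` terabytes over a `link_gbps` link.

    `efficiency` is an assumed fraction of nominal bandwidth actually achieved.
    """
    if link_gbps <= 0 or not 0 < efficiency <= 1:
        raise ValueError("link_gbps must be > 0 and efficiency in (0, 1]")
    bits = volume_tb * 8e12  # 1 TB = 10^12 bytes = 8 * 10^12 bits
    seconds = bits / (link_gbps * 1e9 * efficiency)
    return seconds / 3600


# Illustrative figures: 50 TB over a 1 Gbps link at the assumed 70% efficiency.
estimate = transfer_hours(50, 1)
print(f"{estimate:.1f} hours, or about {estimate / 24:.1f} days")
```

If the estimate exceeds your downtime tolerance, that points toward an initial bulk load followed by incremental syncs and a parallel run rather than a single cutover.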

6. Plan for Cost Implications and Budgeting

Although Databricks offers cost efficiency through autoscaling and decoupled compute and storage, migration still has financial implications.

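- Platform Costs: Databricks bills by consumption, measured in Databricks Units (DBUs), and rates vary by workload type. Compare pricing for jobs compute, interactive (all-purpose) clusters, and Delta Live Tables against your expected usage.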

- Data Egress Charges: Moving data out of existing cloud or on-prem systems can incur network costs.

- Storage Format Optimization: Plan to convert data to open formats, such as Parquet or Delta, to maximize savings.

- Training & Enablement: Allocate budget for upskilling teams on Spark, Delta Lake, Unity Catalog, and Databricks tools.

Run a Total Cost of Ownership (TCO) analysis to make the business case stronger.
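The arithmetic behind a basic TCO comparison is simple enough to sketch. The model below is a deliberately simplified assumption: the `CostModel` fields and all dollar figures are hypothetical, and a real analysis would add items such as staffing and the cost of running both platforms in parallel.

```python
from dataclasses import dataclass


@dataclass
class CostModel:
    annual_compute: float
    annual_storage: float
    annual_licenses: float = 0.0
    one_time_migration: float = 0.0  # e.g. egress, pipeline rebuilds, training

    def annual_run_cost(self):
        return self.annual_compute + self.annual_storage + self.annual_licenses

    def tco(self, years):
        """Total cost of ownership over `years`, including one-time costs."""
        return self.one_time_migration + self.annual_run_cost() * years


def break_even_years(current, target):
    """Years until the target's annual savings repay its migration cost."""
    annual_savings = current.annual_run_cost() - target.annual_run_cost()
    if annual_savings <= 0:
        return None  # the migration never pays back on run cost alone
    return target.one_time_migration / annual_savings


# Hypothetical figures, for illustration only.
current = CostModel(annual_compute=300_000, annual_storage=100_000,
                    annual_licenses=100_000)
target = CostModel(annual_compute=250_000, annual_storage=60_000,
                   annual_licenses=40_000, one_time_migration=200_000)

print(current.tco(3), target.tco(3))
print(break_even_years(current, target))
```

With these made-up inputs, the target platform costs less over three years and recovers the migration spend in a little over a year. The structure matters more than the numbers: one-time costs on one side, recurring savings on the other.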

7. Evaluate Integration with Existing Tools & Ecosystem

Ensure that your current and future toolchains integrate smoothly with Databricks.

- BI Tools: Validate compatibility with tools like Power BI, Tableau, Looker, and ThoughtSpot.

- ETL/ELT Tools: Determine if existing ETL processes (e.g., Informatica, Talend, Fivetran, dbt) are supported or need to be rebuilt.

- Version Control & CI/CD: Plan integration with Git, Azure DevOps, Jenkins, or GitHub Actions for data pipeline deployments.

- Data Catalogs & Metadata Tools: Check if Databricks integrates with existing cataloging tools or if Unity Catalog will replace them.

Strong interoperability minimizes disruption and accelerates value realization.

Best Practices for a Successful Databricks Lakehouse Migration

1. Start with a Comprehensive Assessment

Evaluate your current data landscape, including sources, volumes, usage, and limitations. Understand what needs to be migrated and what can be retired. Define the business goals driving this migration to guide all planning and execution efforts.

2. Align on a Clear Migration Strategy

Choose the right approach (lift-and-shift, re-platform, or re-architect) based on your goals and system complexity. Plan phased execution (discovery, pilot, complete migration), and prioritize high-impact use cases to build momentum.

3. Ensure Strong Data Governance and Security

Implement governance early using tools like Unity Catalog. Define access controls, track data lineage, and enforce compliance policies. Secure sensitive data through encryption, masking, and regular audits.

4. Enable Cross-Functional Collaboration

Engage all key stakeholders—IT, data teams, business users—from day one. Clearly define roles, foster collaboration, and validate progress with end users to ensure the migration supports real-world needs.

5. Optimize Workloads and Data Models

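Don’t just move data; refactor it. Convert to Delta Lake, improve partitioning and data layout (for example, with Z-ordering, since Delta Lake relies on data skipping rather than traditional indexes), and streamline pipelines with tools like Delta Live Tables. This maximizes performance, reliability, and cost-efficiency.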
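For existing Parquet data, conversion and layout tuning usually come down to two Delta Lake SQL commands: `CONVERT TO DELTA` and `OPTIMIZE ... ZORDER BY`. The sketch below only assembles those statements as strings; the helper names, the storage path, and the table and column names are hypothetical.

```python
def convert_to_delta(path, partition_spec=None):
    """Build a CONVERT TO DELTA statement for a Parquet directory.

    `partition_spec` is a list of (column, type) pairs, needed when the
    Parquet data is partitioned on disk.
    """
    stmt = f"CONVERT TO DELTA parquet.`{path}`"
    if partition_spec:
        cols = ", ".join(f"{name} {dtype}" for name, dtype in partition_spec)
        stmt += f" PARTITIONED BY ({cols})"
    return stmt


def optimize(table, zorder_cols=()):
    """Build an OPTIMIZE statement, optionally co-locating data by column."""
    stmt = f"OPTIMIZE {table}"
    if zorder_cols:
        stmt += f" ZORDER BY ({', '.join(zorder_cols)})"
    return stmt


# Hypothetical path, table, and columns, for illustration only.
print(convert_to_delta("/mnt/raw/events", [("event_date", "DATE")]))
print(optimize("main.sales.events", ["customer_id"]))
```

On Databricks, each statement could be run with `spark.sql(...)`. Good Z-order candidates are generally high-cardinality columns that appear often in query filters, so validate the choice against real query patterns.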

6. Test, Validate, and Monitor

Thoroughly test for data integrity, performance, and user experience. Utilize staging environments to simulate workloads and continuously monitor post-migration activities to detect and resolve issues promptly.
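A common data-integrity check is to compare row counts and an order-independent checksum between the source and the migrated table. The sketch below works on in-memory rows to show the idea. The function names are hypothetical, and it assumes values have already been normalized to the same Python types on both sides. At scale, you would compute the same aggregates inside each engine.

```python
import hashlib


def table_fingerprint(rows):
    """Return (row_count, checksum) for an iterable of row tuples.

    Per-row hashes are summed modulo 2**64, so the result does not depend
    on row order but still changes when duplicate rows are added or dropped.
    """
    count = 0
    checksum = 0
    for row in rows:
        encoded = repr(tuple(row)).encode("utf-8")
        row_hash = int.from_bytes(hashlib.sha256(encoded).digest()[:8], "big")
        checksum = (checksum + row_hash) % 2**64
        count += 1
    return count, checksum


def reconcile(source_rows, target_rows):
    """Compare two row sets by count and checksum."""
    src_count, src_sum = table_fingerprint(source_rows)
    tgt_count, tgt_sum = table_fingerprint(target_rows)
    return {
        "row_count_match": src_count == tgt_count,
        "checksum_match": src_sum == tgt_sum,
    }


# Illustrative rows; the target holds the same data in a different order.
source = [(1, "a"), (2, "b")]
target = [(2, "b"), (1, "a")]
print(reconcile(source, target))
```

A count match with a checksum mismatch usually means values changed in flight, for example through type coercion or truncation, which is worth investigating before cutover.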

7. Upskill Teams and Promote Adoption

Train teams on Spark, Delta Lake, and Databricks tools to unlock the full value of the platform. Foster knowledge sharing and create internal champions to drive adoption and long-term success.

Need Help with Your Lakehouse Migration?

Migrating to Databricks is a high-stakes transformation, and having the right partner can make all the difference. At Credencys, we specialize in end-to-end Databricks Lakehouse migrations, helping enterprises modernize their data architecture with minimal risk and maximum impact. From strategy and assessment to execution and optimization, our certified Databricks experts ensure a seamless, future-ready transition.

Wrapping Up

Migrating to the Databricks Lakehouse Platform can unlock transformative value for your business. From real-time analytics to scalable machine learning, the platform positions you to thrive in a data-first world. But success depends on careful planning, execution, and governance.

By following these best practices and keeping critical considerations in mind, you can ensure a migration that’s not just smooth but strategic. Connect with our certified Databricks experts for a free assessment.
