Data Orchestration Tools in 2026: The Ultimate Buyer’s Guide

In 2026, data ecosystems are more complex than ever. Enterprises are managing data across multiple cloud platforms, SaaS tools, real-time streams, and distributed architectures like lakehouses and data mesh.

As a result, data pipelines have become highly interconnected, making them harder to manage, monitor, and scale. Without a structured orchestration layer, organizations often face:

- Pipeline failures and broken dependencies

- Delayed or inconsistent data delivery

- Limited visibility into pipeline performance

- Increased operational burden on data teams

This is where data orchestration tools play a critical role. They act as the control layer that automates, schedules, and monitors data workflows, ensuring seamless data movement across the entire stack.

As businesses increasingly rely on real-time analytics and AI-driven decisions, data orchestration is essential for building reliable, scalable, and efficient data pipelines. Let’s start by understanding what data orchestration really means.

What Is Data Orchestration?

Data orchestration is the process of automatically managing, coordinating, and monitoring data workflows across multiple systems. It ensures that data moves seamlessly through the stages of ingestion, transformation, validation, and delivery without manual intervention.

At its core, data orchestration connects various tools in your data ecosystem and ensures they work together in the right sequence, at the right time, with the right dependencies.
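To make "the right sequence, with the right dependencies" concrete, here is a minimal sketch using only Python's standard library. The pipeline and task names are hypothetical, and `run_task` is a placeholder for real work. Orchestration tools handle this ordering for you, along with scheduling, retries, and monitoring.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each task maps to the set of tasks that must
# finish before it can start.
PIPELINE = {
    "ingest_orders": set(),
    "ingest_customers": set(),
    "validate": {"ingest_orders", "ingest_customers"},
    "transform": {"validate"},
    "load_warehouse": {"transform"},
}


def run_task(name: str) -> None:
    # Placeholder for real work: an API call, a SQL query, a Spark job, ...
    print(f"running {name}")


def run_pipeline(graph: dict[str, set[str]]) -> list[str]:
    """Run each task only after all of its upstream tasks have run."""
    executed = []
    # static_order() yields a valid dependency order and raises
    # graphlib.CycleError if the graph contains a cycle.
    for task in TopologicalSorter(graph).static_order():
        run_task(task)
        executed.append(task)
    return executed


if __name__ == "__main__":
    run_pipeline(PIPELINE)
```

Both ingestion tasks may run in either order, but `validate` always waits for both of them, and `load_warehouse` always runs last.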

Key Capabilities of Data Orchestration Tools

Modern data orchestration tools provide several critical capabilities:

- Workflow Scheduling: Automate when pipelines run (batch, event-driven, or real-time)

- Dependency Management: Ensure tasks execute in the correct order

- Monitoring & Alerting: Track pipeline health and get notified of failures

- Error Handling & Retries: Automatically recover from failures

- Scalability: Handle growing data volumes and complex workflows

- Integration: Connect seamlessly with tools like data warehouses, lakes, APIs, and ML platforms
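Error handling and retries are often the first capabilities teams rely on. The sketch below is a simplified, standard-library illustration of a common pattern: retry a failing task with exponential backoff, and raise an alert only after the final attempt fails. The function name, its parameters, and the `on_failure` hook are hypothetical; each orchestration tool exposes its own retry settings.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def run_with_retries(
    task: Callable[[], T],
    max_attempts: int = 3,
    base_delay: float = 1.0,
    on_failure: Callable[[Exception], None] = lambda exc: None,
) -> T:
    """Call `task`, retrying with exponential backoff before giving up.

    Waits base_delay, 2 * base_delay, 4 * base_delay, ... between attempts.
    If the last attempt fails, `on_failure` is called (a stand-in for an
    email, Slack, or pager alert) and the error is re-raised.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except Exception as exc:
            if attempt == max_attempts:
                on_failure(exc)
                raise
            time.sleep(base_delay * 2 ** (attempt - 1))
    # Not reached: the loop always returns or re-raises.
    raise AssertionError("unreachable")


if __name__ == "__main__":
    calls = {"count": 0}

    def flaky_extract() -> str:
        # Fails twice, then succeeds: a simulated transient outage.
        calls["count"] += 1
        if calls["count"] < 3:
            raise ConnectionError("source API unavailable")
        return "rows extracted"

    print(run_with_retries(flaky_extract, base_delay=0.1))
    # prints: rows extracted
```

Backing off exponentially gives a briefly unavailable source time to recover instead of hammering it with immediate retries.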

By bringing these capabilities together, data orchestration tools act as the backbone of modern data operations, helping ensure that data is available, reliable, and ready for analysis.

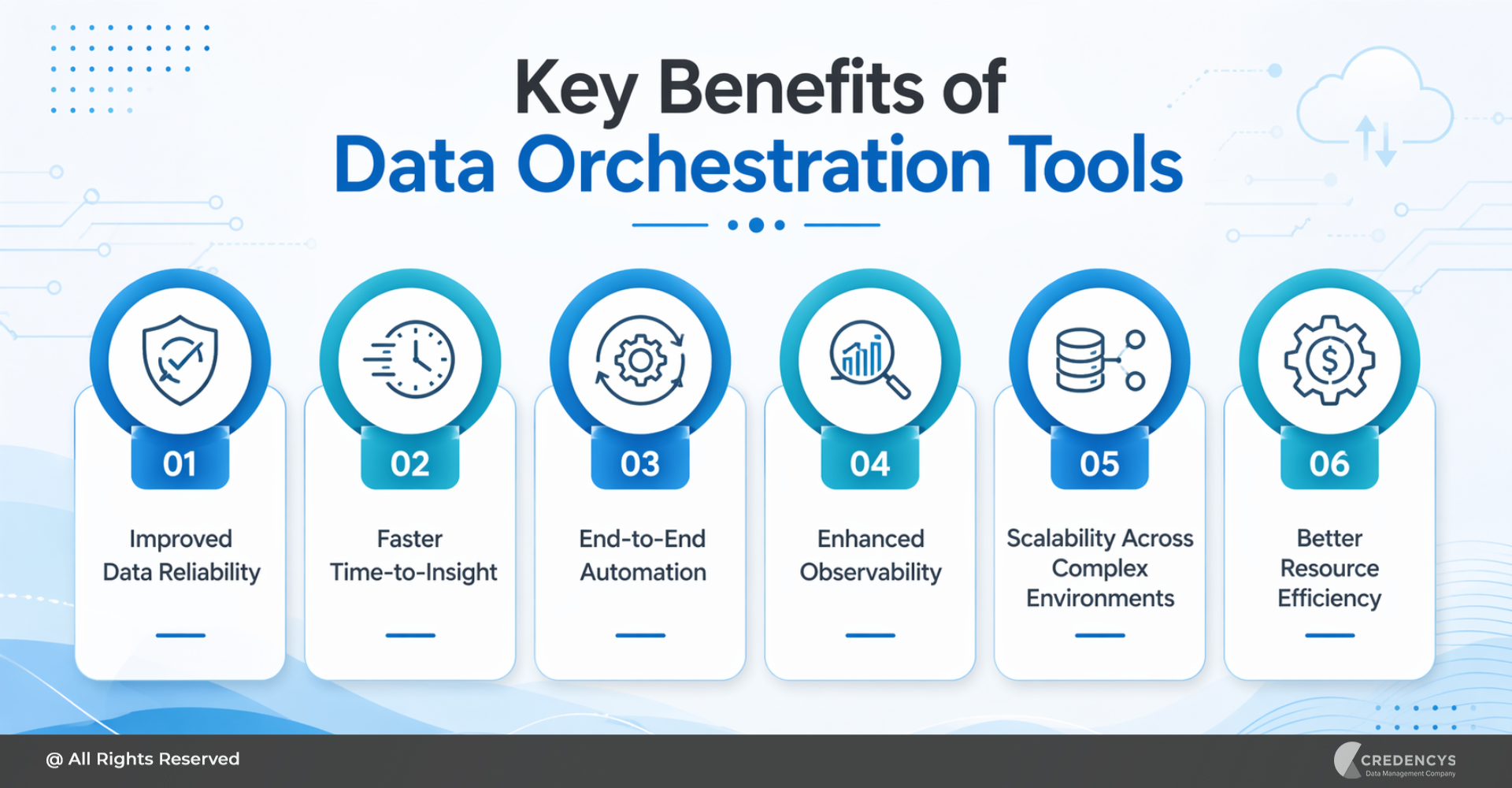

Key Benefits of Data Orchestration Tools

Implementing the right data orchestration tools can significantly improve how organizations manage and scale their data operations. Beyond automation, these tools bring structure, reliability, and efficiency to complex data pipelines.

1. Improved Data Reliability

Orchestration tools run workflows in the correct sequence and manage the dependencies between tasks. This reduces failures caused by tasks running out of order or before upstream data is ready, and leads to more consistent data outputs.

2. Faster Time-to-Insight

By automating data workflows, organizations can deliver data to analytics platforms and dashboards faster, enabling quicker, data-driven decision-making.

3. End-to-End Automation

Manual intervention is minimized with automated scheduling, execution, and error handling. This allows data teams to focus on innovation rather than routine pipeline management.

4. Enhanced Observability

Modern orchestration platforms provide visibility into pipeline performance, logs, and failures. This makes it easier to monitor workflows, troubleshoot issues, and maintain system health.
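As a rough illustration of what visibility into pipeline performance, logs, and failures means in practice, the sketch below wraps each task so that its status, duration, and any error are logged and kept in a run history. `RunRecord`, `RunHistory`, and `observed` are hypothetical names; real platforms persist this metadata and surface it in a UI.

```python
import logging
import time
from dataclasses import dataclass, field
from typing import Callable

logging.basicConfig(level=logging.INFO, format="%(levelname)s %(message)s")
log = logging.getLogger("pipeline")


@dataclass
class RunRecord:
    task: str
    status: str  # "success" or "failed"
    duration_s: float
    error: str = ""


@dataclass
class RunHistory:
    records: list[RunRecord] = field(default_factory=list)

    def failures(self) -> list[RunRecord]:
        return [r for r in self.records if r.status == "failed"]


def observed(history: RunHistory, name: str, fn: Callable[[], object]) -> bool:
    """Run `fn`, log the outcome, and record its status and duration."""
    start = time.perf_counter()
    try:
        fn()
    except Exception as exc:
        elapsed = time.perf_counter() - start
        history.records.append(RunRecord(name, "failed", elapsed, repr(exc)))
        log.error("task=%s status=failed duration=%.3fs error=%r", name, elapsed, exc)
        return False
    elapsed = time.perf_counter() - start
    history.records.append(RunRecord(name, "success", elapsed))
    log.info("task=%s status=success duration=%.3fs", name, elapsed)
    return True


if __name__ == "__main__":
    history = RunHistory()
    observed(history, "extract", lambda: time.sleep(0.05))
    observed(history, "transform", lambda: 1 / 0)
    print([(r.task, r.status) for r in history.failures()])
    # prints: [('transform', 'failed')]
```

Returning `False` instead of re-raising keeps the sketch short; a real pipeline would usually also stop or skip the tasks downstream of a failure.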

5. Scalability Across Complex Environments

Whether operating in cloud, hybrid, or multi-cloud environments, orchestration tools can scale to handle increasing data volumes and workflow complexity.

6. Better Resource Efficiency

Automated workflows reduce redundant processing and optimize resource use, resulting in lower operational costs over time.

By enabling reliable, automated, and scalable data workflows, data orchestration tools form the foundation of a modern data stack, supporting everything from business intelligence to advanced AI use cases.

Top Data Orchestration Tools in 2026 (Expert Picks)

With a rapidly evolving data ecosystem, modern orchestration tools are moving beyond simple scheduling to enable scalable, reliable, and data-aware pipelines. Below are the top five data orchestration tools in 2026, along with a closer look at what makes each one stand out.

1. Apache Airflow

Apache Airflow is one of the most widely adopted data orchestration tools, especially in large enterprises. Originally developed at Airbnb, it popularized the practice of defining workflows as code using Python-based DAGs (directed acyclic graphs). This approach gives data engineers full control over pipeline logic, making it highly flexible for complex workflows.

Over the years, Airflow has evolved into a robust ecosystem with strong community support and widespread adoption across industries. It integrates seamlessly with a wide range of data tools, including cloud platforms, data warehouses, and transformation tools.
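In Airflow itself, DAGs are declared with Airflow's own API (a `DAG` object, operators or `@task`-decorated functions, and `>>` to set dependencies), which this example does not reproduce. Instead, it is a standard-library sketch, under simplifying assumptions, of what a scheduler does with such a DAG: start every task whose upstream tasks have finished, running independent tasks in parallel. The DAG and task names are hypothetical.

```python
from concurrent.futures import FIRST_COMPLETED, ThreadPoolExecutor, wait
from graphlib import TopologicalSorter

# Hypothetical DAG: task -> upstream tasks it depends on.
DAG = {
    "extract_a": set(),
    "extract_b": set(),
    "join": {"extract_a", "extract_b"},
    "report": {"join"},
}


def execute(task: str) -> str:
    # Placeholder for the real work an operator would do.
    return task


def run_dag(dag: dict[str, set[str]], max_workers: int = 4) -> list[str]:
    """Run tasks concurrently as soon as all of their upstreams succeed."""
    sorter = TopologicalSorter(dag)
    sorter.prepare()  # validates the graph; raises CycleError on cycles
    finished: list[str] = []
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        running = {}
        while sorter.is_active():
            # Submit every task whose dependencies are all marked done.
            for task in sorter.get_ready():
                running[pool.submit(execute, task)] = task
            done, _ = wait(running, return_when=FIRST_COMPLETED)
            for future in done:
                task = running.pop(future)
                future.result()  # re-raises if the task failed
                sorter.done(task)  # may unlock downstream tasks
                finished.append(task)
    return finished


if __name__ == "__main__":
    print(run_dag(DAG))
```

`extract_a` and `extract_b` have no dependencies, so they can run at the same time; `join` is only submitted once both are marked done.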

Key Features:

- Python-based DAG workflows

- Extensive plugin ecosystem

- Scalable scheduling and monitoring

Pros:

- Highly customizable

- Mature and widely adopted

- Strong community support

Limitations:

- Complex setup and maintenance

- Steeper learning curve

- Limited native data awareness

Best For:

- Large enterprises with complex, batch-oriented pipelines and strong data engineering teams

2. Prefect

Prefect is designed as a modern alternative to traditional orchestration tools like Airflow, focusing on simplicity, flexibility, and developer experience. It takes a “developer-first” approach, allowing engineers to build workflows in pure Python without the constraints of rigid DAGs.

One of Prefect’s biggest strengths is its dynamic execution model, which lets workflows adapt at runtime based on conditions and data states. It also offers built-in observability, making it easier to track pipeline performance and debug issues.

Key Features:

- Python-native workflows

- Dynamic pipeline execution

- Built-in retries and caching

- Strong observability and logging
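Because Prefect flows and tasks are ordinary Python functions (marked with Prefect's `@flow` and `@task` decorators), regular `if` statements and loops decide the shape of each run at runtime. The sketch below does not use Prefect: its `task` decorator is a hypothetical stand-in that records each run and caches results by argument, a much-simplified version of the caching idea. The storage contents are made up.

```python
import functools
from typing import Callable

# Hypothetical storage contents standing in for a real bucket listing.
FAKE_STORAGE = {"raw": ["orders.csv", "customers.csv"], "archive": []}

# Every actual task execution (cache misses only) is appended here.
RUN_LOG: list[str] = []


def task(fn: Callable) -> Callable:
    """Hypothetical task decorator: logs each run and caches by arguments."""
    cache: dict[tuple, object] = {}

    @functools.wraps(fn)
    def wrapper(*args):
        if args not in cache:
            RUN_LOG.append(f"{fn.__name__}{args}")
            cache[args] = fn(*args)
        return cache[args]

    return wrapper


@task
def list_new_files(source: str) -> tuple[str, ...]:
    return tuple(FAKE_STORAGE.get(source, []))


@task
def process(path: str) -> int:
    # Pretend to load the file and return a row count.
    return len(path)


@task
def report_no_data(source: str) -> int:
    # Pretend to send a "nothing to process" notification.
    return 0


def ingest_flow(source: str) -> int:
    """Ordinary Python control flow shapes the run at runtime."""
    files = list_new_files(source)
    if not files:  # branch chosen from live data
        return report_no_data(source)
    # Fan out over however many files exist in this run.
    return sum(process(path) for path in files)


if __name__ == "__main__":
    print(ingest_flow("raw"), ingest_flow("archive"))
    # prints: 23 0
```

Calling `ingest_flow("raw")` a second time returns the same result without re-running any task, because every call hits the cache.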

Pros:

- Easy to use and deploy

- Flexible and dynamic workflows

- Excellent developer experience

Limitations:

- Smaller ecosystem than Airflow

- Advanced features in paid tiers

Best For:

- Teams seeking a modern, flexible orchestration tool with minimal complexity

3. Dagster

Dagster represents a shift toward data-aware orchestration, where the focus is not just on tasks but on the data assets themselves. Instead of managing pipelines as a series of steps, Dagster models workflows around data dependencies, lineage, and quality.

This makes it particularly well-suited for modern data platforms where reliability, testing, and observability are critical. Dagster includes built-in features for data validation, type checking, and lineage tracking, helping teams catch issues early and maintain trust in their data.
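Dagster's `@asset` decorator can infer an asset's upstream dependencies from its function parameter names. The standard-library sketch below imitates that idea to show how asset-based orchestration derives both lineage and an execution order from the same definitions. The `asset` decorator, the registry, and the assets are hypothetical and far simpler than Dagster's API.

```python
import inspect
from graphlib import TopologicalSorter
from typing import Callable

# Hypothetical asset registry: asset name -> function that computes it.
ASSETS: dict[str, Callable] = {}


def asset(fn: Callable) -> Callable:
    """Register `fn` as an asset named after the function."""
    ASSETS[fn.__name__] = fn
    return fn


def upstream_of(name: str) -> list[str]:
    # Each parameter name is read as the name of an upstream asset.
    return list(inspect.signature(ASSETS[name]).parameters)


@asset
def raw_orders() -> list[dict]:
    return [{"id": 1, "amount": 40.0}, {"id": 2, "amount": -5.0}]


@asset
def clean_orders(raw_orders: list[dict]) -> list[dict]:
    # A simple quality rule: drop orders with negative amounts.
    return [o for o in raw_orders if o["amount"] >= 0]


@asset
def revenue(clean_orders: list[dict]) -> float:
    return sum(o["amount"] for o in clean_orders)


def lineage() -> dict[str, list[str]]:
    """Map each asset to the assets it is computed from."""
    return {name: upstream_of(name) for name in ASSETS}


def materialize_all() -> dict[str, object]:
    """Compute every asset after its upstream assets, passing values in."""
    values: dict[str, object] = {}
    for name in TopologicalSorter(lineage()).static_order():
        inputs = {dep: values[dep] for dep in upstream_of(name)}
        values[name] = ASSETS[name](**inputs)
    return values


if __name__ == "__main__":
    print(lineage())
    print(materialize_all()["revenue"])
```

The focus shifts from "which step runs next" to "which data depends on which data": the lineage graph is not maintained separately, it falls out of the asset definitions.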

Key Features:

- Asset-based orchestration

- Built-in data lineage and observability

- Type checking and testing support

Pros:

- Strong focus on data reliability

- Better debugging and testing

- Ideal for modern data stacks

Limitations:

- Learning curve for new concepts

- Smaller community than Airflow

Best For:

- Organizations focused on data quality, governance, and scalable data platforms

4. Astronomer

Astronomer is a fully managed orchestration platform built on Apache Airflow, designed to eliminate the operational complexity of deploying and maintaining Airflow at scale. It provides a production-ready environment with built-in tools for monitoring, scaling, and securing workflows.

By abstracting infrastructure management, Astronomer allows teams to focus on building and optimizing pipelines rather than managing clusters and dependencies. It also enhances Airflow with enterprise-grade capabilities, including CI/CD integration, role-based access control, and advanced observability.

Key Features:

- Managed Airflow platform

- Automated scaling and monitoring

- Enterprise-grade security and governance

Pros:

- Eliminates infrastructure overhead

- Faster deployment and scaling

- Enterprise-ready features

Limitations:

- Higher cost

- Still tied to Airflow architecture

Best For:

- Enterprises that want the power of Airflow without managing infrastructure

5. Kestra

Kestra is an emerging orchestration platform built for event-driven, cloud-native workflows. Unlike traditional tools that rely heavily on scheduled batch processing, it can react to real-time events and trigger workflows dynamically.

It uses a declarative YAML-based approach, making it easier to define and manage workflows without extensive coding. Kestra also offers a scalable architecture capable of handling large volumes of tasks with low latency.
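Kestra flows are written as YAML documents that list tasks and the triggers that start them. To stay within one language, the sketch below expresses a similar declarative idea as Python dictionaries and adds a tiny dispatcher that starts every flow whose trigger matches an incoming event. The schema, flow names, and event names are hypothetical and do not follow Kestra's actual YAML format.

```python
from dataclasses import dataclass
from typing import Callable

# Declarative flow definitions, written as Python dicts to stay stdlib-only.
# The keys form a hypothetical, simplified schema, not Kestra's YAML format.
FLOWS = [
    {
        "id": "load_new_file",
        "trigger": {"event": "file_uploaded"},
        "tasks": ["validate_file", "load_to_warehouse"],
    },
    {
        "id": "refresh_reports",
        "trigger": {"event": "load_finished"},
        "tasks": ["refresh_dashboard"],
    },
]


def validate_file(payload: dict) -> None:
    if not str(payload.get("path", "")).endswith(".csv"):
        raise ValueError(f"unexpected file: {payload.get('path')!r}")


# Task implementations, looked up by the names used in the flow definitions.
TASKS: dict[str, Callable[[dict], None]] = {
    "validate_file": validate_file,
    "load_to_warehouse": lambda payload: None,  # placeholder
    "refresh_dashboard": lambda payload: None,  # placeholder
}


@dataclass
class Event:
    name: str
    payload: dict


def dispatch(event: Event) -> list[str]:
    """Run every flow whose trigger matches the incoming event."""
    executed = []
    for flow in FLOWS:
        if flow["trigger"]["event"] == event.name:
            for task_name in flow["tasks"]:
                TASKS[task_name](event.payload)
                executed.append(f"{flow['id']}.{task_name}")
    return executed


if __name__ == "__main__":
    print(dispatch(Event("file_uploaded", {"path": "orders.csv"})))
```

Because the flows are data rather than code, they can be reviewed, versioned, and edited without touching the dispatcher, which is one appeal of the declarative approach.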

Key Features:

- YAML-based workflow definitions

- Event-driven execution

- Scalable, cloud-native architecture

Pros:

- Simple and intuitive workflow design

- Strong support for real-time use cases

- Modern and scalable

Limitations:

- Relatively new ecosystem

- Limited enterprise adoption

Best For:

- Teams building event-driven, real-time data pipelines in modern architectures

These five tools highlight how the data orchestration landscape is evolving from traditional workflow schedulers to modern, developer-friendly, and data-aware platforms.

Comparison Table: Top Data Orchestration Tools

To help you evaluate the right platform for your needs, here’s a side-by-side comparison of the top data orchestration tools in 2026:

| Tool | Best For | Strengths | Limitations | Pricing Model |
|---|---|---|---|---|
| Airflow | Enterprise batch pipelines | Highly flexible, large ecosystem, open source | Complex setup, limited data awareness | Free and open source (infrastructure costs apply) |
| Prefect | Developer-friendly orchestration | Easy to use, dynamic workflows, strong UX | Smaller ecosystem, advanced features in paid tiers | Freemium |
| Dagster | Data-centric organizations | Data-aware, strong testing and lineage | Learning curve, smaller community | Open source, with a paid managed cloud |
| Astronomer | Managed Airflow deployments | Fully managed, enterprise-ready | Higher cost, tied to Airflow | Subscription |
| Kestra | Event-driven, real-time pipelines | Scalable, modern architecture | Less mature ecosystem | Open core |

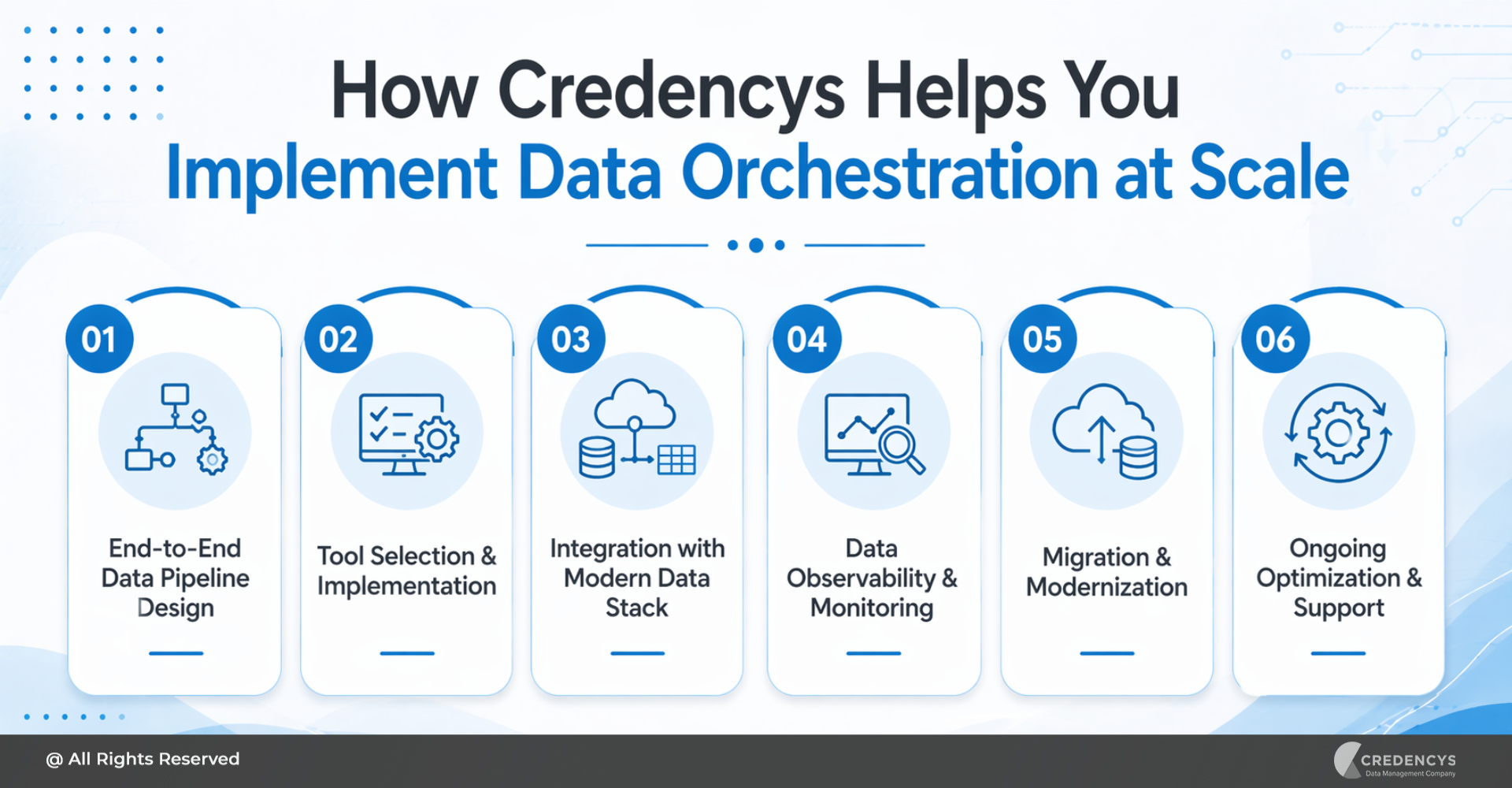

How Credencys Helps You Implement Data Orchestration at Scale

Choosing the right data orchestration tool is only part of the equation. The real challenge lies in designing, implementing, and scaling orchestration workflows that align with your business goals and data architecture.

This is where Credencys brings deep expertise.

1. End-to-End Data Pipeline Design

Credencys helps you design robust, scalable data pipelines tailored to your business needs. From architecture planning to workflow structuring, the focus is on building pipelines that are reliable, efficient, and future-ready.

2. Tool Selection & Implementation

With a wide range of orchestration tools available, selecting the right one can be overwhelming. Credencys evaluates your:

- Use cases (batch, real-time, AI/ML)

- Existing tech stack

- Team capabilities

and recommends the best-fit orchestration platform, followed by seamless implementation.

3. Integration with Modern Data Stack

Credencys ensures smooth integration of orchestration tools with your existing ecosystem, including:

- Data platforms like Databricks and Snowflake

- Transformation tools like dbt

- APIs, streaming platforms, and cloud services

This enables unified and efficient data workflows across your organization.

4. Data Observability & Monitoring

Beyond orchestration, Credencys implements strong observability frameworks to:

- Monitor pipeline performance

- Detect and resolve failures quickly

- Ensure data reliability and trust

5. Migration & Modernization

Still relying on legacy ETL tools or manual workflows? Credencys helps you:

- Migrate to modern orchestration platforms

- Modernize outdated data pipelines

- Adopt best practices for scalability and performance

6. Ongoing Optimization & Support

Data orchestration is not a one-time setup. Credencys provides continuous support to:

- Optimize pipeline performance

- Reduce operational costs

- Scale workflows as your data grows

Final Thoughts

Data orchestration has become a foundational layer of modern data ecosystems. As organizations continue to scale their data platforms and adopt real-time analytics and AI-driven use cases, the need for reliable, automated, and well-orchestrated pipelines is only growing.

While there are several powerful data orchestration tools available in 2026, the right choice ultimately depends on your specific requirements, whether it’s flexibility, ease of use, data awareness, or cloud-native scalability. Each tool brings unique strengths, and selecting the right one requires careful evaluation of your architecture, team capabilities, and long-term goals.

However, it’s important to remember that choosing the right tool is only half the battle. The real value comes from how effectively it is implemented, integrated, and scaled within your data ecosystem.

By combining the right orchestration platform with a strong data engineering strategy, organizations can:

- Ensure consistent and reliable data flows

- Accelerate time-to-insight

- Improve decision-making with trusted data

- Build a scalable foundation for future growth

As data complexity continues to rise, investing in the right data orchestration approach will be key to staying competitive in a data-driven world.
