Choosing the Right Data Orchestration Strategy for Your Cloud Data Stack

Modern businesses generate vast amounts of data, yet over 68% of it goes unused for analytics, according to IDC.

Why? Because the data isn’t properly orchestrated across systems.

Data orchestration bridges this gap by automating the flow of data across cloud platforms, tools, and teams. It ensures data is clean, timely, and accessible, powering everything from real-time dashboards to AI models.

In this blog, we’ll explore how to choose the right data orchestration strategy for your cloud data stack and how the right choice can unlock speed, scalability, and smarter decision-making.

- The Role of Data Orchestration in Cloud Data Stacks

- Key Considerations When Choosing a Data Orchestration Strategy

- Comparing Popular Data Orchestration Approaches

- Real-World Examples of Effective Orchestration Strategies

- Mistakes to Avoid in Orchestration Strategy

- How Credencys Helps Enterprises Orchestrate Smarter

- Conclusion

The Role of Data Orchestration in Cloud Data Stacks

As organizations move from legacy systems to cloud-native data platforms, managing data workflows becomes significantly more complex. Different teams use different tools, data lives across multiple clouds, and the business demands real-time insights, not static reports.

That’s where data orchestration becomes critical.

What Data Orchestration Does:

- Automates workflows across ingestion, transformation, validation, and delivery.

- Connects disparate systems: cloud warehouses, data lakes, ETL tools, APIs, and real-time streams.

- Improves data reliability by ensuring consistency, quality, and timely availability.

- Enables AI/ML, BI, and analytics to work with ready-to-use data at scale.

Without orchestration, businesses face fragmented data pipelines, delays, and duplicated effort, all of which slow down innovation and decision-making. In a modern cloud data stack, data orchestration acts as the central nervous system, keeping everything synchronized, observable, and responsive.
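To make the idea concrete, here is a minimal sketch of what an orchestrator does at its core: it runs each step only after the steps it depends on have finished. The task names, sample rows, and the `run_pipeline` helper are illustrative assumptions, not part of any specific tool; real orchestrators add scheduling, retries, and monitoring on top of this.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical pipeline: each task lists the upstream tasks it depends on.
DEPENDENCIES = {
    "ingest": [],
    "transform": ["ingest"],
    "validate": ["transform"],
    "deliver": ["validate"],
}

# Hypothetical task bodies; each receives the results of its upstream tasks.
TASKS = {
    "ingest": lambda up: [{"sku": "A1", "qty": "3"}, {"sku": "B2", "qty": "5"}],
    "transform": lambda up: [{**row, "qty": int(row["qty"])} for row in up["ingest"]],
    "validate": lambda up: [row for row in up["transform"] if row["qty"] >= 0],
    "deliver": lambda up: len(up["validate"]),  # number of rows delivered
}


def run_pipeline(tasks, dependencies):
    """Run every task after all of its upstream tasks; return (order, results)."""
    order, results = [], {}
    for name in TopologicalSorter(dependencies).static_order():
        upstream = {dep: results[dep] for dep in dependencies.get(name, ())}
        results[name] = tasks[name](upstream)
        order.append(name)
    return order, results


order, results = run_pipeline(TASKS, DEPENDENCIES)
print(order)
print(results["deliver"])
```

The dependency map is the important part: once the order is declared as data rather than buried in scripts, the same definition can drive scheduling, monitoring, and lineage.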

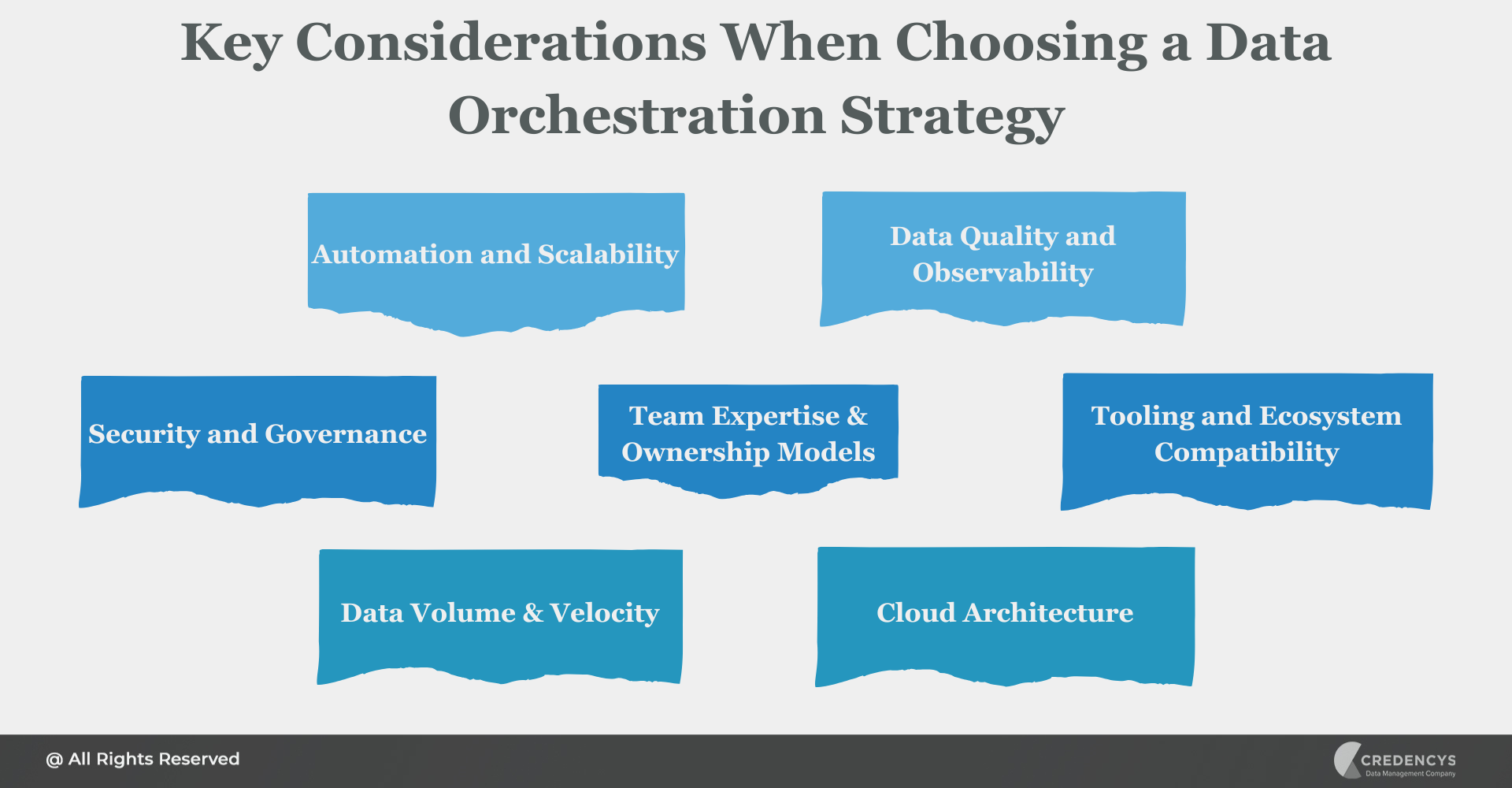

Key Considerations When Choosing a Data Orchestration Strategy

Choosing the right data orchestration strategy isn’t just about picking a tool; it’s about aligning with your infrastructure, data goals, and team maturity. Below are the key factors to evaluate when crafting your orchestration approach:

1. Automation and Scalability

- Will the strategy scale as your data grows?

- Look for dynamic, parameterized workflows, auto-scaling, and retry mechanisms that reduce manual maintenance.
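As a rough illustration of parameterized workflows and retry mechanisms, the sketch below wraps a task in a retry loop with exponential backoff and builds tasks from parameters. The helper names (`run_with_retries`, `make_load_task`), the table names, and the date are hypothetical; most orchestration tools provide equivalent features as configuration rather than hand-written code.

```python
import time


def run_with_retries(task, *, max_attempts=3, base_delay=1.0, sleep=time.sleep):
    """Run task(); after a failure wait base_delay * 2**(attempt - 1) seconds, then retry."""
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except Exception:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error
            sleep(base_delay * 2 ** (attempt - 1))


def make_load_task(table, run_date):
    """Build a task for one table and date (hypothetical stand-in for a real load job)."""
    def task():
        return f"loaded {table} for {run_date}"
    return task


# One workflow definition, many parameter sets.
for table in ("orders", "customers"):
    print(run_with_retries(make_load_task(table, "2024-01-01"), base_delay=0.1))
```

Passing `sleep` as a parameter keeps the retry logic easy to test without real waiting.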

2. Data Quality and Observability

- Can you integrate data validation, monitoring, and lineage tracking into your workflows?

- Orchestration should support automated checks and alerting for failed jobs or inconsistent data.
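An automated check can be as simple as a function that inspects a batch and reports problems before downstream steps run. The sketch below assumes rows arrive as dictionaries and that `alert` is any callable (for example, one that posts to a chat channel); both are assumptions for illustration, not a specific tool's API.

```python
def check_batch(rows, required_fields, min_rows=1):
    """Return a list of human-readable problems; an empty list means the batch passed."""
    problems = []
    if len(rows) < min_rows:
        problems.append(f"expected at least {min_rows} rows, got {len(rows)}")
    for index, row in enumerate(rows):
        missing = [field for field in required_fields if row.get(field) is None]
        if missing:
            problems.append(f"row {index} is missing {', '.join(missing)}")
    return problems


def validate_or_alert(rows, required_fields, alert):
    """Send one alert per problem; return True only if the batch is clean."""
    problems = check_batch(rows, required_fields)
    for problem in problems:
        alert(problem)
    return not problems


batch = [{"id": 1, "amount": 10}, {"id": 2, "amount": None}]
if not validate_or_alert(batch, ["id", "amount"], alert=print):
    print("batch held back from downstream steps")
```

Returning a pass/fail flag lets the orchestrator decide whether to stop the pipeline, quarantine the batch, or continue with a warning.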

3. Security and Governance

- Ensure the orchestration layer supports role-based access control (RBAC), logging, and the compliance requirements that apply to you (GDPR, HIPAA, etc.).

- Seamless integration with data catalogs or governance layers like Unity Catalog or Atlan is a plus.

4. Team Expertise and Ownership Models

- Who will own the orchestration: centralized data engineering teams or domain-specific squads?

- Tools like Apache Airflow may suit engineering-heavy teams, while low-code platforms are ideal for business-aligned data users.

5. Tooling and Ecosystem Compatibility

- Does the orchestration layer work well with the rest of your data stack (e.g., dbt, Airbyte, Snowflake, Databricks, Apache Kafka)?

- Consider tools that offer out-of-the-box connectors or support for widely used languages and formats such as Python, SQL, and YAML.

6. Data Volume and Velocity

- Are you dealing with massive data sets, real-time event streams, or batch processing?

- High-frequency updates require orchestration strategies that support event-driven or streaming pipelines.

- For periodic workloads, cron-based or scheduled workflows may suffice.
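The difference between the two trigger styles can be sketched in a few lines. In the example below, `EventRouter` starts work the moment an event arrives, while `is_due` is the kind of check a scheduler makes on every tick. The class, function, and event names are hypothetical and only meant to contrast the two models.

```python
from collections import defaultdict
from datetime import datetime, timedelta


class EventRouter:
    """Event-driven trigger: run registered workflows when a matching event arrives."""

    def __init__(self):
        self._handlers = defaultdict(list)

    def on(self, event_type, handler):
        self._handlers[event_type].append(handler)

    def emit(self, event_type, payload):
        # Use .get so unknown event types do not create empty entries.
        return [handler(payload) for handler in self._handlers.get(event_type, [])]


def is_due(last_run, now, interval):
    """Scheduled trigger: True once at least `interval` has passed since last_run."""
    return now - last_run >= interval


router = EventRouter()
router.on("cart_abandoned", lambda event: f"enrich profile {event['customer_id']}")
print(router.emit("cart_abandoned", {"customer_id": 42}))

# An hourly batch job checked one hour after its last run.
print(is_due(datetime(2024, 1, 1, 0, 0), datetime(2024, 1, 1, 1, 0), timedelta(hours=1)))
```

Event-driven triggers keep latency low at the cost of more moving parts; scheduled triggers are simpler to reason about and are often enough when data only needs to be fresh to the hour or the day.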

7. Cloud Architecture (Single, Multi-Cloud, or Hybrid)

- If you operate in a multi-cloud or hybrid environment, orchestration must support cross-cloud data movement and integrations.

- Ensure your strategy can handle data movement between cloud-native services such as Amazon S3, Azure Blob Storage, and Google BigQuery.

By aligning your strategy with these dimensions, you’ll build workflows that are not only efficient but also resilient, observable, and future-ready.

Comparing Popular Data Orchestration Approaches

With a growing number of tools and frameworks available, choosing the right orchestration approach can be overwhelming. The best fit depends on your use case, team expertise, and the level of flexibility or abstraction you need.

Below is a quick comparison of the most common types of data orchestration approaches:

| Strategy Type | Best For | Example Tools |
|---|---|---|
| Code-Based Orchestration | Engineering-led teams that need full control | Apache Airflow, Dagster, Luigi, Prefect |
| Low-Code / No-Code Tools | Fast development, less technical overhead | Ascend, Jitterbit |
| Cloud-Native Orchestration | Tight integration with cloud services | AWS Step Functions, Azure Data Factory, Google Cloud Composer |
| Platform-Native Workflows | End-to-end orchestration built into data platforms | Databricks Workflows, Snowflake Tasks |

Ultimately, your orchestration strategy should balance flexibility, ease of maintenance, scalability, and compatibility with your broader data architecture.

Real-World Examples of Effective Orchestration Strategies

To better understand how orchestration strategies come to life, let’s look at how different industries leverage them to drive efficiency, agility, and innovation across their data operations.

1. Manufacturing: IoT-Driven Predictive Maintenance

A global manufacturing firm orchestrates data from sensors on factory equipment to predict failures before they happen.

Strategy Highlights:

- Streaming pipelines pull real-time sensor data into a central lakehouse.

- Orchestration automates data validation, ML scoring, and dashboard refreshes.

- Built using Databricks Workflows and AWS Step Functions.

2. Retail: Real-Time Customer Insights

A large omnichannel retailer uses real-time orchestration to unify data from eCommerce, POS systems, loyalty apps, and inventory systems.

Strategy Highlights:

- Event-driven orchestration triggers workflows upon customer actions (e.g., cart abandonment).

- Pipelines enrich customer profiles in real time for hyper-personalized recommendations.

- Orchestrated across Snowflake, Kafka, and dbt with alerting via Datadog.

3. Supply Chain: Inventory Optimization Across Regions

A distribution company needs to sync data from warehouses, logistics partners, and ERP systems to optimize inventory.

Strategy Highlights:

- Batch orchestration pipelines run hourly to track stock levels and forecast demand.

- Prefect is used for ease of monitoring and retries, with dbt transformations layered in.

- Real-time alerts are triggered when stock falls below thresholds in specific regions.

These examples demonstrate that the right orchestration strategy isn’t one-size-fits-all; it’s about matching workflows to business needs, tools, and team capacity.

Mistakes to Avoid in Orchestration Strategy

Even the most advanced data teams can fall into common traps when designing their orchestration strategy. Avoiding these pitfalls can save time, reduce costs, and prevent data downtime.

1. Ignoring Data Governance and Observability

Orchestration isn’t just about automation; it’s also about trusting your data. Skipping validation, lineage, and monitoring leads to silent failures and unreliable insights.

2. Siloed Ownership and Lack of Collaboration

When orchestration is owned solely by IT or engineering, other teams may lack visibility and agility. A good strategy encourages cross-functional collaboration and enables self-service where possible.

3. Poor Pipeline Maintenance

Once deployed, pipelines often require ongoing tuning, scaling, and dependency updates. Failing to maintain them can lead to brittle workflows that break as your data grows or changes.

4. Overengineering Simple Workflows

Not every use case requires a complex DAG or custom-coded pipeline. Avoid building overly intricate workflows when low-code solutions or native platform features can get the job done faster.

5. Choosing Tools Without a Clear Roadmap

Jumping into orchestration platforms without assessing long-term goals can lead to rework and technical debt. Start with a clear understanding of your data volume, team skillset, and infrastructure needs.

Avoiding these mistakes from the outset ensures your orchestration strategy is built for both scale and sustainability.

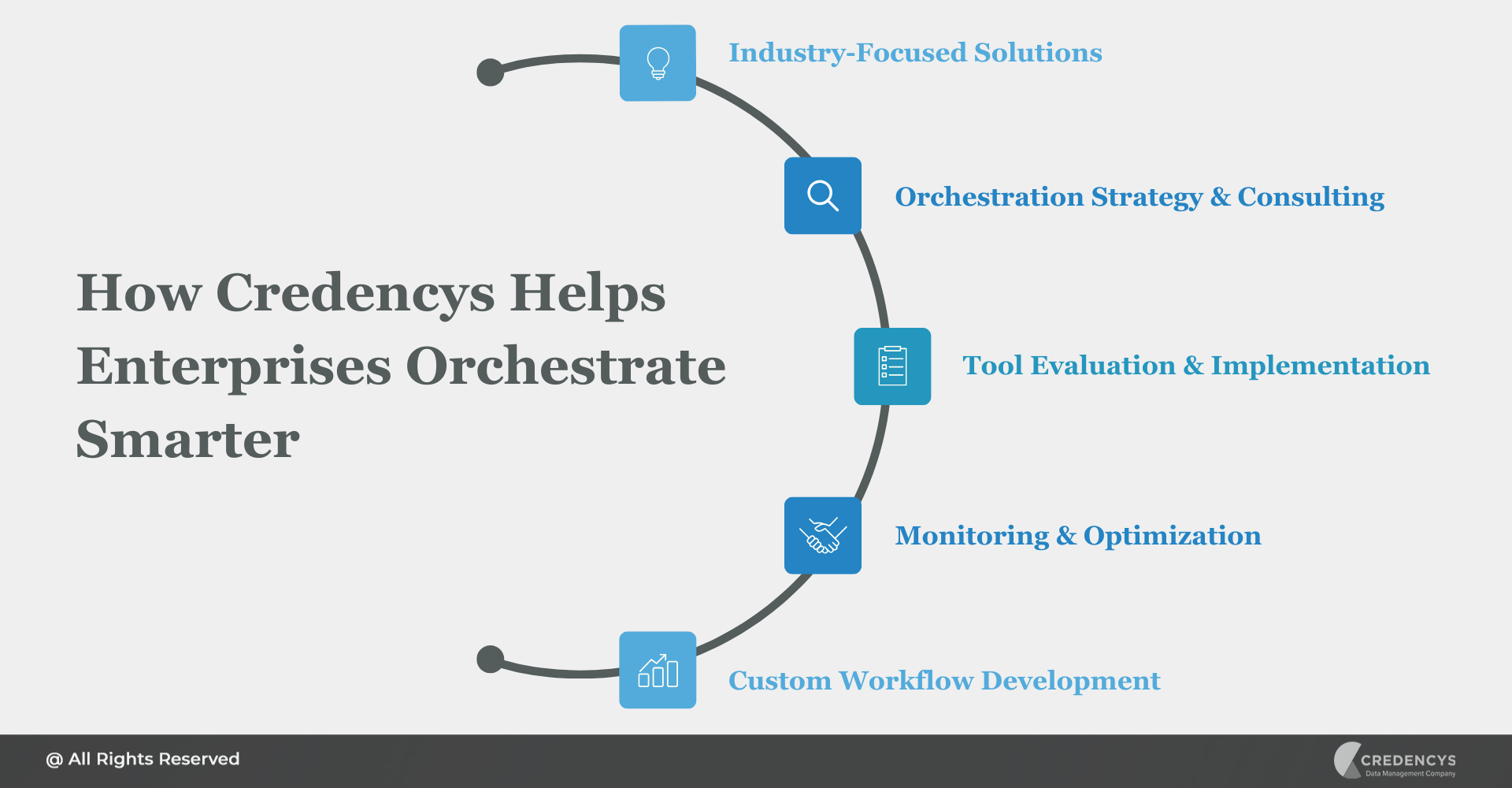

How Credencys Helps Enterprises Orchestrate Smarter

Choosing the right orchestration strategy is just the beginning; execution is where real value is unlocked. That’s where Credencys comes in.

We help enterprises design, build, and optimize scalable, future-ready data orchestration frameworks tailored to their specific goals, cloud infrastructure, and data stack maturity.

Our Expertise Includes:

- Industry-Focused Solutions: Tailored orchestration for Retail, CPG, Manufacturing, Logistics, and more.

- Orchestration Strategy & Consulting: Aligning workflows with your cloud architecture, data volume, and business objectives.

- Tool Evaluation & Implementation: Expertise in Apache Airflow, Prefect, Dagster, Ascend, Databricks Workflows, Snowflake Tasks, and more.

- Monitoring & Optimization: Integrating observability, logging, and alerts to ensure reliable data movement and fast issue resolution.

- Custom Workflow Development: Building robust data pipelines for batch, real-time, and hybrid use cases across cloud and hybrid environments.

With our deep domain knowledge and modern engineering practices, we help organizations go beyond automation to build orchestration strategies that are intelligent, adaptive, and ROI-driven.

Conclusion

A strong data orchestration strategy is essential for any business aiming to scale its analytics, AI, and digital transformation efforts. It’s the engine that ensures your data is not just collected, but delivered, transformed, and activated exactly when and where it’s needed.

From selecting the right tools to aligning with your cloud architecture and business needs, thoughtful orchestration unlocks faster insights, higher data quality, and smarter decision-making.
