Databricks Cost Optimization Best Practices for 2026 and Beyond

Why does your Databricks bill keep growing?

According to cloud cost management reports, over 30% of cloud spend is wasted due to inefficient usage, and analytics platforms are among the biggest contributors as data volumes, users, and AI workloads scale.

Databricks is no exception. What starts as a flexible, high-performance analytics platform can quietly turn into one of the most expensive line items on your cloud bill.

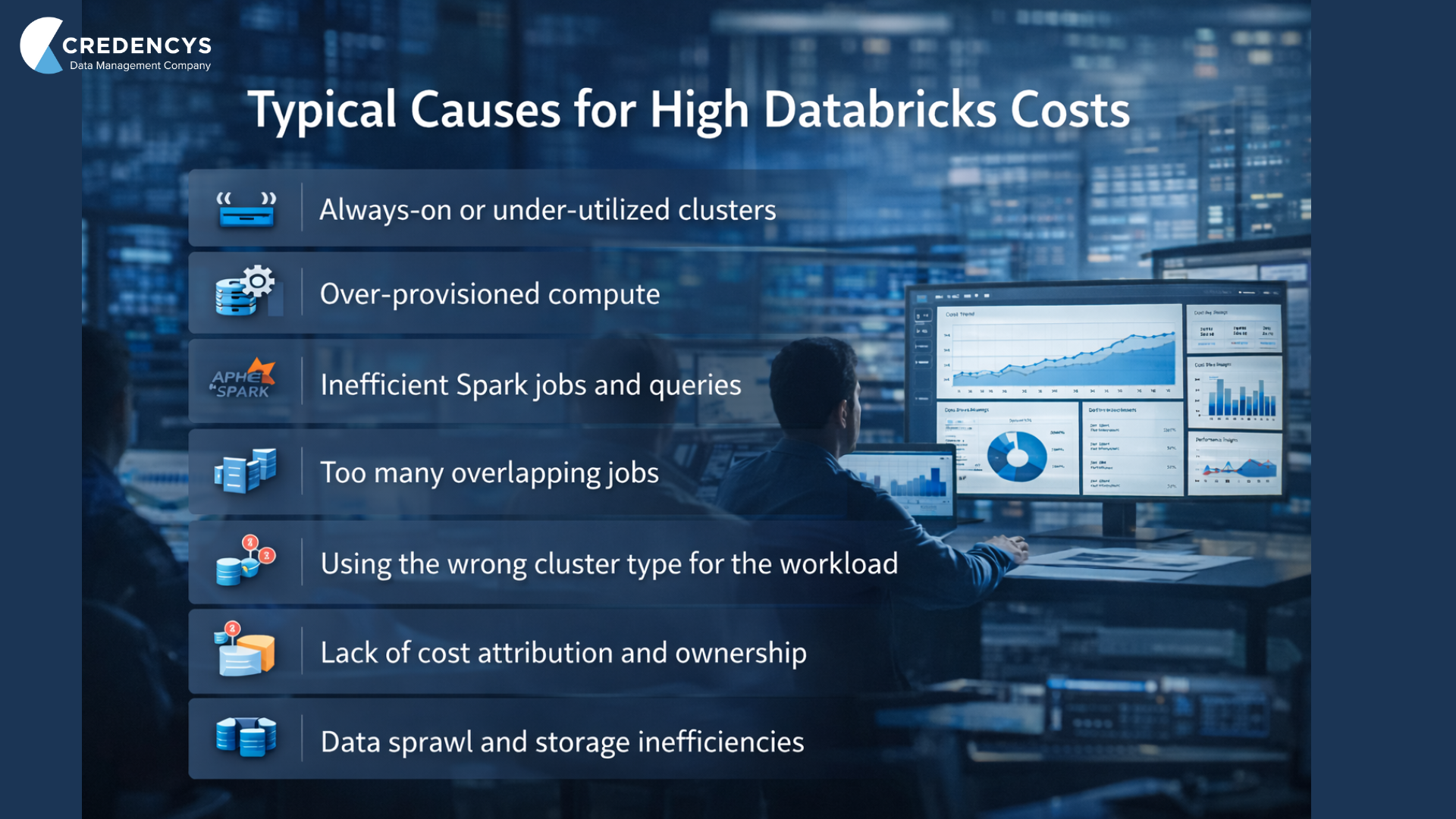

The challenge is not that Databricks is “too costly” by default. The real issue is how compute, jobs, clusters, and teams use it over time. Idle clusters, over-provisioned resources, inefficient Spark jobs, and a lack of cost visibility compound month after month, often without anyone noticing until finance flags the spike.

That’s why Databricks Cost Optimization in 2026 and beyond is less about cutting corners and more about engineering discipline, visibility, and governance at scale. When done right, organizations don’t just reduce spend, they unlock faster performance, better accountability, and higher ROI from the same platform.

Databricks Cost Optimization: How Does it Work?

At a high level, Databricks cost optimization is about controlling how compute resources are consumed and ensuring every DBU spent delivers real business value.

Databricks pricing is usage-based. You pay for compute while clusters are running, jobs are executing, and workloads are processing data. Storage and cloud infrastructure add to the bill, but compute remains the primary cost driver for most organizations.

This means optimization does not start with discounts or negotiations. It starts with how your platform is designed and used every day.

Here is how Databricks cost optimization works in practice.

First, it focuses on visibility.

You cannot optimize what you cannot see. Teams need clear answers to simple questions:

- Which jobs consume the most DBUs?

- Which teams or projects drive the highest spend?

- How much compute is actually doing useful work versus sitting idle?

Once visibility is in place, optimization shifts to behavior and configuration. This includes how clusters are sized, how long they run, and how workloads are scheduled. Small configuration decisions made early often multiply into high monthly costs at scale.

Another key layer is workload efficiency.

Two Spark jobs can produce the same output, yet one may cost several times more due to inefficient joins, poor partitioning, or unnecessary data scans. Optimizing code and execution patterns directly lowers runtime and DBU usage.

Finally, effective Databricks cost optimization introduces governance and accountability.

When teams can see the cost impact of their workloads, behavior changes naturally. Optimization stops being a firefighting exercise and becomes part of normal engineering practice.

Best Practices for Databricks Cost Optimization

Strong Databricks cost optimization comes from a mix of platform visibility, technical efficiency, and team behavior. No single tactic works on its own. The biggest gains happen when these practices reinforce each other over time.

Below is a blended, practical set of best practices that work well for teams operating Databricks at scale.

1. Monitor Usage Continuously with the Right Tools

Cost optimization starts with visibility. Use Databricks system tables to track DBU consumption, cluster runtime, and job-level usage. This helps identify which workloads and teams drive the highest costs.

For deeper insights, many organizations integrate external cost tools to break down spend by team, project, or environment. Frequent monitoring also makes it easier to spot idle clusters, runaway jobs, or sudden usage spikes before they turn into month-end surprises.

2. Right-size Clusters for Real Workloads

Over-sized clusters waste money. Under-sized clusters waste time. Both increase costs. Review actual CPU, memory, and runtime metrics instead of relying on default or “safe” configurations.

Lightweight reporting jobs and simple ETL pipelines rarely need large clusters. Heavier analytics or ML workloads might, but only during peak execution. Revisit cluster sizing regularly as data volumes and usage patterns change.

3. Use Autoscaling with Clear Boundaries

Autoscaling works best when limits are set thoughtfully. Define realistic minimum and maximum worker counts so clusters do not scale more than necessary. This helps balance performance and cost without sacrificing reliability.

4. Enable Auto-Termination on all Interactive Clusters

Idle clusters are one of the most common sources of wasted DBUs. Auto-termination shuts down clusters after a defined period of inactivity. Even saving one or two idle hours per cluster can translate into meaningful monthly savings at scale.

5. Prefer Job Clusters for Scheduled Workloads

For recurring pipelines and production jobs, job clusters are usually more cost-efficient than shared interactive clusters. They start when needed, run the job, and shut down automatically. This eliminates idle compute and keeps execution environments clean and predictable.

6. Schedule Heavy Jobs During Off-peak Hours

Many cloud providers offer lower rates during off-peak windows, such as nights or weekends. Running predictable, resource-intensive workloads like nightly ETL or batch analytics during these periods can significantly reduce overall cost. Staggering jobs also prevents unnecessary concurrency that forces Databricks to scale aggressively.

7. Optimize Queries and Spark Jobs to Reduce Runtime

Infrastructure tuning alone is not enough. Poorly optimized queries can consume excessive compute. Focus on efficient query design using proper partitioning, caching where appropriate, and avoiding unnecessary full-table scans. Reducing job runtime directly lowers DBU consumption and often improves reliability.

8. Keep Databricks Runtimes Up-to-Date

Databricks runtimes include performance improvements, better memory handling, and more efficient execution engines. Regular upgrades help reduce compute time without changing workloads, resulting in immediate cost savings.

9. Optimize Data Storage and Access Patterns

Unused or obsolete data increases processing costs when scanned repeatedly. Clean up old datasets, remove duplicates, and use efficient formats like Delta Lake to reduce read and write overhead.

Implement data retention policies to automatically remove outdated data. This controls cost while supporting governance and compliance goals.

10. Create Cost Awareness Across Teams

Databricks cost optimization cannot live only with the platform or the finance team. Train engineers, analysts, and data scientists on how DBUs are consumed and how their design choices affect cost. When teams can see the impact of their workloads and share cost-saving practices, optimization becomes part of everyday development rather than a reactive exercise.

Databricks Cost Optimization: Case Study

About the Client

A large global enterprise running high-volume analytics and reporting workloads on Databricks as part of its cloud data platform, supporting multiple business-critical use cases across teams.

Key Challenges

The client faced rapidly increasing Databricks cloud costs, long workflow execution times, and inconsistent performance. A lack of standardization across Spark pipelines, inefficient resource usage, and limited governance made it difficult to control spend without impacting scalability and reliability.

Solution Implemented

Credencys conducted a deep technical assessment of the Databricks environment, optimized Spark jobs, and redesigned infrastructure usage based on workload criticality. The solution combined Spark performance tuning, smarter cluster and instance selection, workflow prioritization, and the implementation of Unity Catalog to embed governance and visibility into the platform.

Business Impact

- 90% reduction in Databricks cloud processing costs

- Workflow execution time reduced from 2 hours to 10 minutes

- Improved reliability and scalability for critical analytics workloads

- Stronger governance, access control, and metadata management using Unity Catalog

Summary

Databricks offers immense flexibility and scale, but without the right discipline, costs can rise faster than the value delivered. As data volumes grow, teams expand, and AI workloads become more common, cost optimization is no longer optional. It is a core part of running Databricks responsibly.

Effective Databricks cost optimization goes beyond reducing spend. It brings clarity into how resources are used, improves performance across workloads, and creates accountability across teams. When visibility, efficient engineering practices, and governance work together, cost control becomes sustainable rather than reactive.

Organizations that treat cost optimization as an ongoing practice, not a one-time exercise, are better positioned to scale analytics and AI without financial surprises. With the right approach and the right partner, Databricks can continue to power innovation while keeping costs predictable and aligned with business outcomes.

Tags: